Dr Di Fu

About

Biography

Dr. Di Fu (Chinese name: 傅迪 or 付迪; "Di" is pronounced "Dee" and means "enlightenment and inspiration" in Chinese, which she guesses is why she is so enthusiastic about human minds and intelligence) is an assistant professor (UK Lecturer) in the Department of Psychology, University of Surrey. She is the director of the Digital Intelligence for Future Users (DIFU) Lab. Her research group focuses on crossmodal learning and human-robot social interaction.

Before that, she worked as a postdoctoral research associate in the Department of Informatics, University of Hamburg. She received her fast-track Ph.D. in cognitive neuroscience from the Institute of Psychology, Chinese Academy of Sciences, in 2020.

She has been honored as an outstanding graduate of CAS and an outstanding doctoral graduate of Beijing. She has been awarded the Kavli Summer Institute in Cognitive Neuroscience fellowship, the International Postdoctoral Exchange fellowship, the CAS-DAAD joint doctoral student fellowship, and the Chinese National Academic Scholarship.

Her work has been published in the International Journal of Social Robotics, Public Administration Review, NeuroImage, iScience, IEEE IROS, ACM/IEEE HRI, IEEE RO-MAN, IEEE IJCNN, etc.

She also serves on the committees of the Chinese Association for Psychological & Brain Sciences and the Chinese German Association for Biology and Medicine.

Areas of specialism

University roles and responsibilities

- Director of Digital Intelligence for Future Users Lab

- Programme Co-leader for MSc Game Design and Digital Innovation

- Social Media Lead for the School of Psychology

Human Impression of Humanoid Robots Mirroring Social Cues

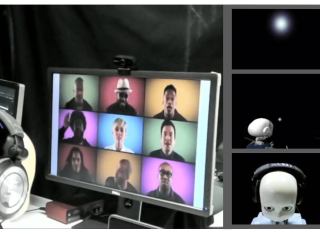

Mirroring non-verbal social cues such as affect or movement can enhance human-human and human-robot interactions in the real world. The robotic platforms and control methods also impact people's perception of human-robot interaction. However, limited studies have compared robot imitation across different platforms and control methods. Our research addresses this gap by conducting two experiments comparing people's perception of affective mirroring between the iCub and Pepper robots and movement mirroring between vision-based iCub control and Inertial Measurement Unit (IMU)-based iCub control. We discovered that the iCub robot was perceived as more humanlike than the Pepper robot when mirroring affect. A vision-based controlled iCub outperformed the IMU-based controlled one in the movement mirroring task. Our findings suggest that different robotic platforms impact people's perception of robots' mirroring during HRI. The control method also contributes to the robot's mirroring performance. Our work sheds light on the design and application of different humanoid robots in the real world.

Supervision

Completed postgraduate research projects I have supervised

- Ziwei Chen (PhD), Social Attention Mechanisms under Face Pareidolia Process, Institute of Psychology, Chinese Academy of Sciences

- Eric Bergter (MSc), Human Navigation Driven: Modeling Visual Localization with Cognitive Graphs and Local Scenes, Department of Informatics, University of Hamburg

- Maximilian Keiff (MSc), Social Attention Prediction in A Free-viewing Eye Tracking Task, Department of Informatics, University of Hamburg

- Navneet Singh Arora (MSc), Multimodal Representational Learning for Dimensional Emotion Recognition, Department of Informatics, University of Hamburg

Teaching

- PSYM034 MSc Dissertation (Module Lead and Dissertation Supervisor)

- PSY3065 UG Dissertation (Dissertation Supervisor)

- PSYM155 Psychology and Game Design II (Module Lead)

Publications

Interactive artificial intelligence (AI) is rapidly reshaping human-centered robotics by moving beyond algorithmic efficiency toward real-time adaptability, transparency, and design for people and contexts. Building on the inaugural InterAI Workshop at IEEE RO-MAN 2024, this second edition, proposed for HRI 2026, will convene the robotics and HRI communities to examine how interactive AI can enable robust, trustworthy systems that operate seamlessly in dynamic, real-world environments. The half-day hybrid program will feature two keynote talks and a peer-reviewed paper oral presentation session. Distinct from prior events, the workshop spotlights the integration of generative and embodied AI with human-in-the-loop learning and real-time decision making, as well as methods for explainability, evaluation, and safety. The workshop aims to catalyze a collaborative agenda that bridges interactive AI technologies and human-centered robotic systems.

Adolescent loneliness is a growing concern in digitally mediated social environments. This work-in-progress presents a youth-authored critical synthesis on chatbots powered by Large Language Model (LLM) and adolescent loneliness. The first author is a 16-year-old Chinese student who recently migrated to the UK. She wrote the first draft of this paper from her lived experience, supervised by the second author. Rather than treating the youth perspective as one data point among many, we foreground it as the primary interpretive lens, grounded in interdisciplinary literature from social computing, developmental psychology, and Human-Computer Interaction (HCI). We examine how chatbots shape experiences of loneliness differently across adolescent subgroups, including those with anxiety or depression, neurodivergent youth, and immigrant adolescents, and identify both conditions under which they may temporarily reduce isolation and breakdowns that risk deepening it. We derive three population-sensitive design implications. The next phase of this work will expand the youth authorship model to a panel of adolescents across these subgroups, empirically validating the framework presented here.

Interactive artificial intelligence (AI) has rapidly emerged as a key field within both the human-computer interaction (HCI) and AI communities, driven by the growth of human-centered and responsible AI over the past few years. Unlike traditional AI approaches that prioritize algorithms, interactive AI emphasizes real-time manipulation, human-centered design, and transparency control in data and algorithms, with the overarching goal of enhancing human benefit. Simultaneously, AI-driven and embodied robotic systems are becoming increasingly integrated into daily life – homes, workplaces, public spaces, and healthcare – where human interaction is central to their success and acceptance. This makes it crucial to design robotic systems that are not only technically capable, but also understandable, trustworthy, and responsive to people’s needs, capabilities, and values. In light of this, we present the third edition of this InterAI workshop – Interactive AI for Human-Centered Robotics – to the CHI community, aiming to gain deeper insights into this emerging area through a half-day, in-person event. Our objective is to explore the current research landscape, identify challenges, and articulate future research directions for integrating interactive AI into human-centered robotic systems.

Recent studies have revealed the key importance of modelling personality in robots to improve interaction quality by empowering them with social-intelligence capabilities. Most research relies on verbal and non-verbal features related to personality traits that are highly context-dependent. Hence, analysing how humans behave in a given context is crucial to evaluate which of those social cues are effective. For this purpose, we designed an assistive memory game in which participants were asked to play the game with support from an introverted or extroverted helper, either a human or a robot. In this context, we aim to (i) explore whether selective verbal and non-verbal social cues related to personality can be modelled in a robot, (ii) evaluate the efficiency of a statistical decision-making algorithm employed by the robot to provide adaptive assistance, and (iii) assess the validity of the similarity attraction principle. Specifically, we conducted two user studies. In the human-human study (N=31), we explored the effects of the helper's personality on participants' performance and extracted distinctive verbal and non-verbal social cues from the human helper. In the human-robot study (N=24), we modelled the extracted social cues in the robot and evaluated its effectiveness on participants' performance. Our findings showed that participants were able to distinguish between the robots' personalities, but not between the levels of autonomy of the robot (Wizard-of-Oz vs fully autonomous). Finally, we found that participants achieved better performance with a robot helper that had a similar personality to them, or a human helper that had a different personality.

Human decision-making behaviors in social contexts are largely driven by fairness considerations. The dual-process model suggests that in addition to cognitive processes, emotion contributes to economic decision-making. Although humor, as an effective emotional regulation strategy to induce positive emotion, may influence an individual's emotional state and decision-making behavior, previous studies have not examined how humor modulates fairness-related responses in the gain and loss contexts simultaneously. This study uses the Ultimatum Game (UG) in gain and loss contexts to explore this issue. The results show that, in the gain context, viewing humorous pictures compared to humorless pictures increased acceptance rates, and this effect was moderated by the offer size. However, we did not find the same effect in the loss context. These findings indicate that humor's effect on fairness considerations may depend on the context and provide insight into the finite power of humor in human sociality, cooperation and norm compliance.

Previous research on scanpath prediction has mainly focused on group models, disregarding the fact that the scanpaths and attentional behaviors of individuals are diverse. The disregard of these differences is especially detrimental to social human-robot interaction, whereby robots commonly emulate human gaze based on heuristics or predefined patterns. However, human gaze patterns are heterogeneous and varying behaviors can significantly affect the outcomes of such human-robot interactions. To fill this gap, we developed a deep learning-based social cue integration model for saliency prediction to instead predict scanpaths in videos. Our model learned scanpaths by recursively integrating fixation history and social cues through a gating mechanism and sequential attention. We evaluated our approach on gaze datasets of dynamic social scenes, observed under the free-viewing condition. The introduction of fixation history into our models makes it possible to train a single unified model rather than the resource-intensive approach of training individual models for each set of scanpaths. We observed that the late neural integration approach surpasses early fusion when training models on a large dataset, in comparison to a smaller dataset with a similar distribution. Results also indicate that a single unified model, trained on all the observers' scanpaths, performs on par or better than individually trained models. We hypothesize that this outcome is a result of the group saliency representations instilling universal attention in the model, while the supervisory signal and fixation history guide it to learn personalized attentional behaviors, providing the unified model a benefit over individual models due to its implicit representation of universal attention.

The efficient integration of multisensory observations is a key property of the brain that yields the robust interaction with the environment. However, artificial multisensory perception remains an open issue especially in situations of sensory uncertainty and conflicts. In this work, we extend previous studies on audio-visual (AV) conflict resolution in complex environments. In particular, we focus on quantitatively assessing the contribution of semantic congruency during an AV spatial localization task. In addition to conflicts in the spatial domain (i.e. spatially misaligned stimuli), we consider gender-specific conflicts with male and female avatars. Our results suggest that while semantically related stimuli affect the magnitude of the visual bias (perceptually shifting the location of the sound towards a semantically congruent visual cue), humans still strongly rely on environmental statistics to solve AV conflicts. Together with previously reported results, this work contributes to a better understanding of how multisensory integration and conflict resolution can be modelled in artificial agents and robots operating in real-world environments.

Sensory and emotional experiences are essential for mental and physical well-being, especially within the realm of psychiatry. This article highlights recent advances in cognitive neuroscience, emphasizing the significance of pain recognition and empathic artificial intelligence (AI) in healthcare. We provide an overview of the recent development process in computational pain recognition and cognitive neuroscience regarding the mechanisms of pain and empathy. Through a comprehensive discussion, the article delves into critical questions such as the methodologies for AI in recognizing pain from diverse sources of information, the necessity for AI to exhibit empathic responses, and the associated advantages and obstacles linked with the development of empathic AI. Moreover, insights into the prospects and challenges are emphasized in relation to fostering artificial empathy. By delineating potential pathways for future research, the article aims to contribute to developing effective assistants equipped with empathic capabilities, thereby introducing safe and meaningful interactions between humans and AI, particularly in the context of mental health and psychiatry.

Selective attention plays an essential role in information acquisition and utilization from the environment. In the past 50 years, research on selective attention has been a central topic in cognitive science. Compared with unimodal studies, crossmodal studies are more complex but necessary to solve real-world challenges in both human experiments and computational modeling. Although an increasing number of findings on crossmodal selective attention have shed light on humans' behavioral patterns and neural underpinnings, a much better understanding is still necessary to yield the same benefit for intelligent computational agents. This article reviews studies of selective attention in unimodal visual and auditory and crossmodal audiovisual setups from the multidisciplinary perspectives of psychology and cognitive neuroscience, and evaluates different ways to simulate analogous mechanisms in computational models and robotics. We discuss the gaps between these fields in this interdisciplinary review and provide insights about how to use psychological findings and theories in artificial intelligence from different perspectives.

As voice assistants (VAs) become increasingly integrated into daily life, the need for emotion-aware systems that can recognize and respond appropriately to user emotions has grown. While significant progress has been made in speech emotion recognition (SER) and sentiment analysis, effectively addressing user emotions, particularly negative ones, remains a challenge. This study explores human emotional response strategies in VA interactions using a role-swapping approach, where participants regulate AI emotions rather than receiving pre-programmed responses. Through speech feature analysis and natural language processing (NLP), we examined acoustic and linguistic patterns across various emotional scenarios. Results show that participants favor neutral or positive emotional responses when engaging with negative emotional cues, highlighting a natural tendency toward emotional regulation and de-escalation. Key acoustic indicators such as root mean square (RMS), zero-crossing rate (ZCR), and jitter were identified as sensitive to emotional states, while sentiment polarity and lexical diversity (TTR) distinguished between positive and negative responses. These findings provide valuable insights for developing adaptive, context-aware VAs capable of delivering empathetic, culturally sensitive, and user-aligned responses. By understanding how humans naturally regulate emotions in AI interactions, this research contributes to the design of more intuitive and emotionally intelligent voice assistants, enhancing user trust and engagement in human-AI interactions.
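For readers unfamiliar with the indicators named in this abstract, the following is a minimal sketch of how RMS, ZCR, and the type-token ratio (TTR) are conventionally defined. It is not the study's analysis pipeline: the function names, the whitespace tokenizer, and the toy inputs are all assumptions made for illustration, and jitter is omitted because it requires pitch-period estimation.

```python
import math

def rms(signal):
    """Root mean square energy of a list of audio samples."""
    if not signal:
        raise ValueError("signal must not be empty")
    return math.sqrt(sum(x * x for x in signal) / len(signal))

def zero_crossing_rate(signal):
    """Fraction of adjacent sample pairs whose signs differ."""
    if len(signal) < 2:
        raise ValueError("signal needs at least two samples")
    crossings = sum(
        1 for a, b in zip(signal, signal[1:]) if (a >= 0) != (b >= 0)
    )
    return crossings / (len(signal) - 1)

def type_token_ratio(text):
    """Lexical diversity: unique tokens divided by total tokens.

    Assumes a naive lowercase whitespace tokenizer (hypothetical choice).
    """
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one token")
    return len(set(tokens)) / len(tokens)

if __name__ == "__main__":
    frame = [1.0, -1.0, 1.0, -1.0]  # toy alternating signal
    print(rms(frame), zero_crossing_rate(frame))
    print(type_token_ratio("I am sorry I am here"))
```

In practice these features are usually computed per short frame of audio and then summarized per utterance; the sketch computes them over a whole list for simplicity.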

Conversational agents (CAs) are increasingly embedded in daily life, yet their ability to navigate user emotions efficiently is still evolving. This study investigates how users with varying traits – gender, personality, and cultural background – adapt their interaction strategies with emotion-aware CAs in specific emotional scenarios. Using an emotion-aware CA prototype expressing five distinct emotions (neutral, happy, sad, angry, and fear) through male and female voices, we examine how interaction dynamics shift across different voices and emotional contexts through empirical studies. Our findings reveal distinct variations in user engagement and conversational strategies based on individual traits, emphasizing the value of personalized, emotion-sensitive interactions. By analyzing both qualitative and quantitative data, we demonstrate that tailoring CAs to user characteristics can enhance user satisfaction and interaction quality. This work underscores the critical need for ongoing research to design CAs that not only recognize but also adaptively respond to emotional needs, ultimately supporting diverse user groups more effectively.

Crossmodal conflict resolution is crucial for robot sensorimotor coupling through the interaction with the environment, yielding swift and robust behaviour also in noisy conditions. In this paper, we propose a neurorobotic experiment in which an iCub robot exhibits human-like responses in a complex crossmodal environment. To better understand how humans deal with multisensory conflicts, we conducted a behavioural study exposing 33 subjects to congruent and incongruent dynamic audio-visual cues. In contrast to previous studies using simplified stimuli, we designed a scenario with four animated avatars and observed that the magnitude and extension of the visual bias are related to the semantics embedded in the scene, i.e., visual cues that are congruent with environmental statistics (moving lips and vocalization) induce the strongest bias. We implement a deep learning model that processes stereophonic sound, facial features, and body motion to trigger a discrete behavioural response. After training the model, we exposed the iCub to the same experimental conditions as the human subjects, showing that the robot can replicate similar responses in real time. Our interdisciplinary work provides important insights into how crossmodal conflict resolution can be modelled in robots and introduces future research directions for the efficient combination of sensory observations with internally generated knowledge and expectations.

Human eye gaze plays an important role in delivering information, communicating intent, and understanding others' mental states. Previous research shows that a robot's gaze can also affect humans' decision-making and strategy during an interaction. However, limited studies have trained humanoid robots on gaze-based data in human-robot interaction scenarios. Considering gaze impacts the naturalness of social exchanges and alters the decision process of an observer, it should be regarded as a crucial component in human-robot interaction. To investigate the impact of robot gaze on humans, we propose an embodied neural model for performing human-like gaze shifts. This is achieved by extending a social attention model and training it on eye-tracking data, collected by watching humans playing a game. We will compare human behavioral performances in the presence of a robot adopting different gaze strategies in a human-human cooperation game.

Robot facial expressions and gaze are important factors for enhancing human-robot interaction (HRI), but their effects on human collaboration and perception are not well understood, for instance, in collaborative game scenarios. In this study, we designed a collaborative triadic HRI game scenario where two participants worked together to insert objects into a shape sorter. One participant assumed the role of a guide. The guide instructed the other participant, who played the role of an actor, to place occluded objects into the sorter. A humanoid robot issued instructions, observed the interaction, and displayed social cues to elicit changes in the two participants' behavior. We measured human collaboration as a function of task completion time and the participants' perceptions of the robot by rating its behavior as intelligent or random. Participants also evaluated the robot by filling out the Godspeed questionnaire. We found that human collaboration was higher when the robot displayed a happy facial expression at the beginning of the game compared to a neutral facial expression. We also found that participants perceived the robot as more intelligent when it displayed a positive facial expression at the end of the game. The robot's behavior was also perceived as intelligent when directing its gaze toward the guide at the beginning of the interaction, not the actor. These findings provide insights into how robot facial expressions and gaze influence human behavior and perception in collaboration.

Caring for individuals living with dementia can be a challenging and emotionally taxing experience, especially for caregivers who are often spouses or partners. Many caregivers lack prior experience in providing care and would greatly benefit from training and support. With the advancements in AI techniques, human-centered AI interaction approaches have shown promise in enhancing dementia care. As the 1st edition, this workshop focuses on the intersection of human-computer interaction (HCI) and artificial intelligence (AI) to address the unique challenges associated with dementia care. Dementia presents multifaceted cognitive and emotional hurdles, and the workshop aims to explore how AI technologies can improve the quality of life for individuals with dementia while also supporting their caregivers. By bringing together researchers, practitioners, caregivers, and stakeholders, the workshop seeks to foster collaboration and innovation in the design, development, and implementation of human-centered AI solutions for dementia care.

To enhance human-robot social interaction, it is essential for robots to process multiple social cues in a complex real-world environment. However, incongruency of input information across modalities is inevitable and could be challenging for robots to process. To tackle this challenge, our study adopted the neurorobotic paradigm of crossmodal conflict resolution to make a robot express human-like social attention. A behavioural experiment was conducted on 37 participants for the human study. We designed a round-table meeting scenario with three animated avatars to improve ecological validity. Each avatar wore a medical mask to obscure the facial cues of the nose, mouth, and jaw. The central avatar shifted its eye gaze while the peripheral avatars generated sound. Gaze direction and sound locations were either spatially congruent or incongruent. We observed that the central avatar's dynamic gaze could trigger crossmodal social attention responses. In particular, human performance was better under the congruent audio-visual condition than the incongruent condition. Our saliency prediction model was trained to detect social cues, predict audio-visual saliency, and attend selectively for the robot study. After mounting the trained model on the iCub, the robot was exposed to laboratory conditions similar to the human experiment. While the human performance was overall superior, our trained model demonstrated that it could replicate attention responses similar to humans.

In real life, people perceive nonexistent faces in face-like objects, a phenomenon called face pareidolia. Face-like objects, similar to averted gazes, can direct the observer's attention. However, the similarities and differences in attentional shifts induced by these two types of stimuli remain underexplored. Through a gaze cueing task, this study compares the cueing effects of face-like objects and averted gaze faces, revealing both commonalities and distinct underlying mechanisms. Our findings demonstrate that while both types of stimuli can elicit attentional shifts, the mechanisms differ: averted gaze faces rely on processing local features like gaze direction, whereas face-like objects leverage their global configuration to enhance attentional shifts triggered by eye-like features. These findings advance the understanding of the processing mechanisms underlying the perception of face-like objects, and how the brain represents facial attributes even when physical facial stimuli are absent. This study provides a valuable theoretical foundation for future investigations into the broader applications of face-like stimuli in human perception and attention.

In resource distribution contexts, individuals have a strong aversion to unfair treatment not only toward themselves but also toward others. However, there is no clear consensus regarding the commonality and distinction between these two types of unfairness. Moreover, many neuroimaging studies have investigated how people evaluate and respond to unfairness in these two contexts, but the consistency of the results remains to be investigated. To resolve these two issues, we sought to summarize existing findings regarding unfairness to self and others and to further elucidate the neural underpinnings related to distinguishing evaluation and response processes through meta-analyses of previous neuroimaging studies. Our results indicated that both types of unfairness consistently activate the affective and conflict-related anterior insula (AI) and dorsal anterior cingulate cortex/supplementary motor area (dACC/SMA), but the activations related to unfairness to self appeared stronger than those related to others, suggesting that individuals had negative reactions to both unfairness and a greater aversive response toward unfairness to self. During the evaluation process, unfairness to self activated the bilateral AI, dACC, and right dorsolateral prefrontal cortex (DLPFC), regions associated with unfairness aversion, conflict, and cognitive control, indicating reactive, emotional and automatic responses. In contrast, unfairness to others activated areas associated with theory of mind, the inferior parietal lobule and temporoparietal junction (IPL-TPJ), suggesting that making rational judgments from the perspective of others was needed. During the response, unfairness to self activated the affective-related left AI and striatum, whereas unfairness to others activated cognitive control areas, the left DLPFC and the thalamus. This indicated that the former maintained the traits of automaticity and emotionality, whereas the latter necessitated cognitive control. These findings provide a fine-grained description of the common and distinct neurocognitive mechanisms underlying unfairness to self and unfairness to others. Overall, this study not only validates the inequity aversion model but also provides direct evidence of neural mechanisms for neurobiological models of fairness.

Conversational agents (CAs) increasingly detect users’ emotions, yet deciding how to respond, especially to negative affect, remains a central design challenge. We conducted a role-switching study in which participants reply as the CAs to simulated users expressing anger, sadness, or fear. Results reveal systematic, gender-linked patterns: most male participants favored a neutral, affect-balanced stance and prioritized clarification or task progress, whereas most female participants produced a wider range of non-neutral responses, more often using explicit empathy, reassurance, and reflective listening. We also observe differences in de-escalation phrasing, validation timing, and follow-up questioning across scenarios. These findings indicate that strategies for handling negative emotions vary with user characteristics and context. Based on these findings, we argue for adaptive CA response policies that calibrate first-turn acknowledgment and information-gathering, tailoring prosody and wording to emotional context in order to support de-escalation, perceived understanding, and user trust.

As text-to-speech systems improve and their generated voices become indistinguishable from natural human speech, the use of these systems for robots raises ethical and safety concerns. A robot with a natural voice could increase trust, which might result in over-reliance despite evidence for robot unreliability. To estimate the influence of a robot’s voice on trust and compliance, we design a study that consists of two experiments. In a pre-study (\(N_{1}=60\)), the most suitable natural and mechanical voices are identified and selected for the main study. Afterward, in the main study (\(N_{2}=68\)), the influence of a robot’s voice on trust and compliance is evaluated in a cooperative game of Battleship with a robot as an assistant. During the experiment, the acceptance of the robot’s advice and response time are measured, which indicate trust and compliance, respectively. The results show that participants expect robots to sound human-like and that a robot with a natural voice is perceived as safer. Additionally, a natural voice can affect compliance. Despite repeated incorrect advice, the participants are more likely to rely on the robot with the natural voice. The results do not show a direct effect on trust. Natural voices provide increased intelligibility, and while they can increase compliance with the robot, the results indicate that natural voices might not lead to over-reliance. The results highlight the importance of incorporating voices into the design of social robots to improve communication, avoid adverse effects, and increase acceptance and adoption in society.

Scaling social robot studies is constrained by the need for human interaction, which makes large participant recruitment impractical. Robotics simulators help mitigate this limitation but generally lack the realism to accurately simulate social cues. We introduce a cognitive robotic simulation scheme to evaluate social attention models in physical environments. By projecting ground-truth priority maps to a simulated environment, we can directly compare predicted maps using common saliency metrics. Using the iCub robot, we assess a dynamic scanpath model that predicts attention targets, simulating human scanpaths. Evaluations with the FindWho and MVVA datasets show strong correlations between robot-captured metrics and direct-streamed video metrics. Our results indicate robustness of the social attention model to noise and real-world conditions, suggesting its practical usability for predicting personalized scanpaths in real settings. This approach reduces the need for extensive human-robot interaction studies in the early stages of study design, enabling the scalability and reproducibility of social robot evaluations.
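As an illustration of what comparing a predicted map against a ground-truth priority map can look like, the sketch below computes the linear correlation coefficient (CC), one metric widely used in saliency evaluation. The abstract does not state which metrics were used, so the choice of CC, the function name, and the toy 2x2 maps are assumptions for illustration only.

```python
import math

def pearson_cc(predicted, ground_truth):
    """Pearson correlation between two equally sized 2D maps (lists of rows)."""
    p = [v for row in predicted for v in row]
    g = [v for row in ground_truth for v in row]
    if not p or len(p) != len(g):
        raise ValueError("maps must be non-empty and the same size")
    mean_p = sum(p) / len(p)
    mean_g = sum(g) / len(g)
    cov = sum((a - mean_p) * (b - mean_g) for a, b in zip(p, g))
    norm_p = math.sqrt(sum((a - mean_p) ** 2 for a in p))
    norm_g = math.sqrt(sum((b - mean_g) ** 2 for b in g))
    if norm_p == 0 or norm_g == 0:
        return 0.0  # a constant map carries no spatial information
    return cov / (norm_p * norm_g)

if __name__ == "__main__":
    truth = [[0.0, 1.0], [2.0, 3.0]]       # toy ground-truth priority map
    inverted = [[3.0, 2.0], [1.0, 0.0]]    # toy prediction with reversed ranking
    print(pearson_cc(truth, truth))
    print(pearson_cc(inverted, truth))
```

A value near 1 means the predicted map ranks locations much like the ground truth, while a value near -1 means the ranking is reversed.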

The COVID-19 pandemic has plunged the world into a crisis. To contain this crisis, it is essential to build full cooperation between the government and the public. However, it is unclear which governmental and individual factors are determinants and how they interact with protective behaviors against COVID-19. To resolve this issue, this study builds a multiple mediation model. Findings show that government emergency public information such as detailed pandemic information and positive risk communication had greater impact on protective behaviors than rumor refutation and supplies. Moreover, governmental factors may indirectly affect protective behaviors through individual factors such as perceived efficacy, positive emotions, and risk perception. These findings suggest that systematic intervention programs for governmental factors need to be integrated with individual factors to achieve effective prevention and control of COVID-19 among the public.

Additional publications

My full publication record: https://scholar.google.com/citations?user=AUqLvaIAAAAJ&hl=en