Dr Sara Atito Ali Ahmed

Academic and research departments

Centre for Vision, Speech and Signal Processing (CVSSP), Faculty of Engineering and Physical Sciences.

About

Biography

Sara Ahmed holds a joint position at the University of Surrey between the Centre for Vision, Speech and Signal Processing (CVSSP) and the Institute of People-Centred Artificial Intelligence. Prior to her current role, Sara completed her PhD in computer science at Sabanci University, where she specialised in deep learning ensembles for image understanding. Currently, her primary focus is on advancing the field of self-supervised learning for vision. Her innovative work in this area has led to her recognition as a Surrey Future Fellow - a prestigious recognition of her outstanding early career achievements. Additionally, her interdisciplinary work and commitment to addressing global issues have earned her a fellowship at the Institute for Sustainability, underscoring her impact in both computer science and broader real-world challenges.

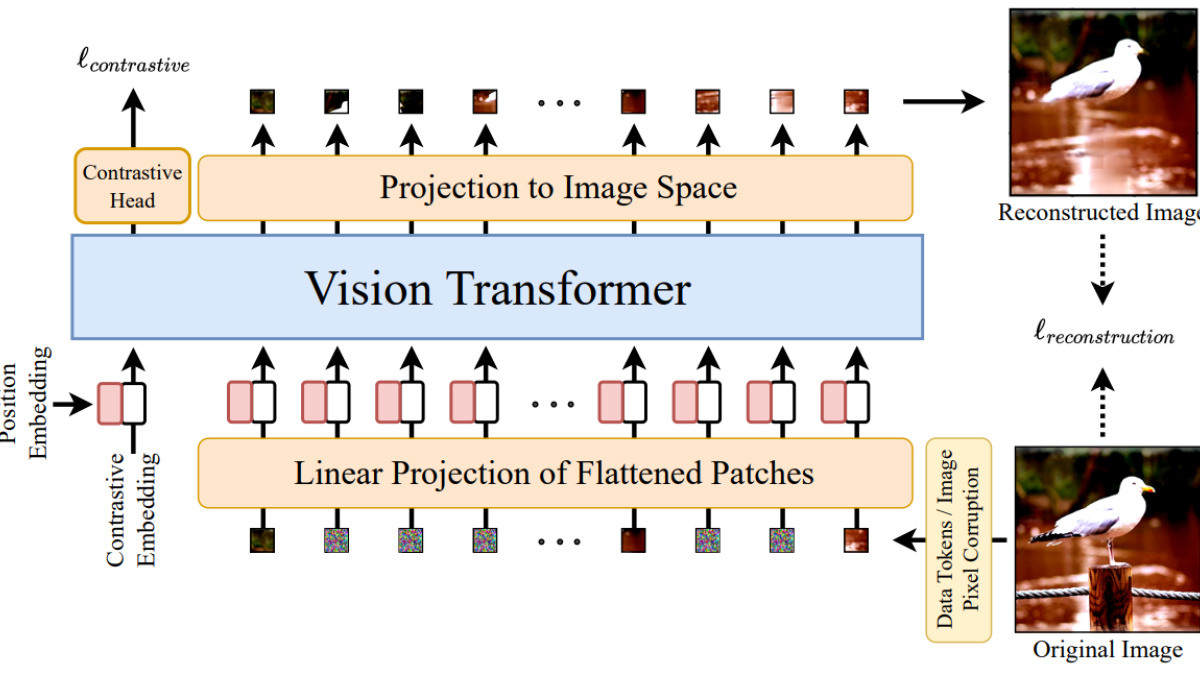

SiT: Self-supervised Vision Transformer

Publications

Foundation models like CLIP and ALIGN have transformed few-shot and zero-shot vision applications by fusing visual and textual data, yet the integrative few-shot classification and segmentation (FS-CS) task primarily leverages visual cues, overlooking the potential of textual support. In FS-CS scenarios, ambiguous object boundaries and overlapping classes often hinder model performance, as limited visual data struggles to fully capture high-level semantics. To bridge this gap, we present a novel multi-modal FS-CS framework that integrates textual cues into support data, facilitating enhanced semantic disambiguation and fine-grained segmentation. Our approach first investigates the unique contributions of exclusive text-based support, using only class labels to achieve FS-CS. This strategy alone achieves performance competitive with vision-only methods on FS-CS tasks, underscoring the power of textual cues in few-shot learning. Building on this, we introduce a dual-modal prediction mechanism that synthesizes insights from both textual and visual support sets, yielding robust multi-modal predictions. This integration significantly elevates FS-CS performance, with classification and segmentation improvements of +3.7/6.6% (1-way 1-shot) and +8.0/6.5% (2-way 1-shot) on COCO-20^i, and +2.2/3.8% (1-way 1-shot) and +4.3/4.0% (2-way 1-shot) on Pascal-5^i. Additionally, in weakly supervised FS-CS settings, our method surpasses visual-only benchmarks using textual support exclusively, further enhanced by our dual-modal predictions. By rethinking the role of text in FS-CS, our work establishes new benchmarks for multi-modal few-shot learning and demonstrates the efficacy of textual cues for improving model generalization and segmentation accuracy.

The emergence of vision-language foundation models has enabled the integration of textual information into vision-based applications. However, in few-shot classification and segmentation (FS-CS), this potential remains underutilised. Commonly, self-supervised vision models have been employed, particularly in weakly-supervised scenarios, to generate pseudo-segmentation masks, as ground truth masks are typically unavailable and only target classification is provided. Despite their success, such models find it difficult to capture accurate semantics when compared to vision-language models. To address this limitation, we propose a novel FS-CS approach that leverages the rich semantic alignment of vision-language models to generate more precise pseudo ground-truth masks. While current vision-language models excel in global visual-text alignment, they struggle with finer, patch-level alignment, which is crucial for detailed segmentation tasks. To overcome this, we introduce a method that enhances patch-level alignment without requiring additional training. In addition, existing FS-CS frameworks typically lack multi-scale information, limiting their ability to capture fine and coarse features simultaneously. To overcome this, we incorporate a module based on atrous convolutions to inject multi-scale information into the feature maps. Together, these contributions - text-enhanced pseudo-mask generation and improved multi-scale feature representation - significantly boost the performance of our model in weakly-supervised settings, surpassing state-of-the-art methods and demonstrating the importance of integrating multi-modal information for robust FS-CS solutions.
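
The abstract above mentions a module based on atrous (dilated) convolutions for multi-scale features. As a rough illustration only, the sketch below applies one kernel at several dilation rates to a 1-D signal, so each position receives responses with different receptive fields. The function names, the 1-D setting, the odd kernel length, and the dilation rates are assumptions made for illustration, not the module described in the paper.

```python
def atrous_conv1d(signal, kernel, dilation):
    """1-D dilated ("atrous") convolution with zero padding.

    Assumes an odd-length kernel centred on each position; the output
    has the same length as the input.
    """
    centre = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for j, weight in enumerate(kernel):
            # Taps are spaced `dilation` samples apart around position i.
            idx = i + (j - centre) * dilation
            if 0 <= idx < len(signal):
                acc += weight * signal[idx]
        out.append(acc)
    return out


def multi_scale_features(signal, kernel, dilations=(1, 2, 4)):
    """Stack the responses of the same kernel at several dilation rates.

    Returns one list per position, holding one value per dilation rate.
    """
    branches = [atrous_conv1d(signal, kernel, d) for d in dilations]
    return [list(column) for column in zip(*branches)]


impulse = [0, 0, 0, 1, 0, 0, 0]
print(atrous_conv1d(impulse, [1, 1, 1], dilation=1))
print(atrous_conv1d(impulse, [1, 1, 1], dilation=2))
```

With a larger dilation the same three-tap kernel covers a wider span of the input without adding parameters, which is the property that makes atrous convolutions useful for mixing fine and coarse context.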

Immunofluorescence (IF) images reveal detailed information about structures and functions at the subcellular level. However, unlike RGB images, IF datasets pose challenges for deep learning models due to their inconsistencies in channel count and configuration, stemming from varying staining protocols across laboratories and studies. Although existing approaches build channel-adaptive models for training, they do not perform evaluations across IF datasets with unseen channel configurations. To address this, we first introduce a biologically informed view of cellular image channels by grouping them into either context or concept, where we treat the context channels as a reference for the concept channels in the image. We leverage this view to propose Channel Conditioned Cell Representations (C3R), a framework that learns representations that transfer well to both in-distribution (ID) and out-of-distribution (OOD) datasets, which contain the same and different channel configurations, respectively. C3R is a twofold framework comprising a channel-adaptive encoder architecture and a masked knowledge distillation training strategy, both built around the context-concept principle. We find that C3R outperforms existing benchmarks on both ID and OOD tasks, while yielding state-of-the-art results on frozen encoder evaluation on the CHAMMI benchmark. Our method opens a new pathway for cross-dataset generalization between IF datasets, with no need for retraining on unseen channel configurations.

Advanced image fusion methods mostly prioritise high-level missions, where task interaction struggles with semantic gaps, requiring complex bridging mechanisms. In contrast, we propose to leverage low-level vision tasks from digital photography fusion, allowing for effective feature interaction through pixel-level supervision. This new paradigm provides strong guidance for unsupervised multimodal fusion without relying on abstract semantics, enhancing task-shared feature learning for broader applicability. Owing to the hybrid image features and enhanced universal representations, the proposed GIFNet supports diverse fusion tasks, achieving high performance across both seen and unseen scenarios with a single model. Uniquely, experimental results reveal that our framework also supports single-modality enhancement, offering superior flexibility for practical applications. Our code will be available at https://github.com/AWCXV/GIFNet.

Self-supervised pre-trained audio networks have seen widespread adoption in real-world systems, particularly in multi-modal large language models. These networks are often employed in a frozen state, under the assumption that the SSL pre-training has sufficiently equipped them to handle real-world audio. However, a critical question remains: how well do these models actually perform in real-world conditions, where audio is typically polyphonic and complex, involving multiple overlapping sound sources? Current audio SSL methods are often benchmarked on datasets predominantly featuring monophonic audio, such as environmental sounds and speech. As a result, the ability of SSL models to generalize to polyphonic audio, a common characteristic in natural scenarios, remains underexplored. This limitation raises concerns about the practical robustness of SSL models in more realistic audio settings. To address this gap, we introduce Self-Supervised Learning from Audio Mixtures (SSLAM), a novel direction in audio SSL research, designed to improve the model's ability to learn from polyphonic data while maintaining strong performance on monophonic data. We thoroughly evaluate SSLAM on standard audio SSL benchmark datasets, which are predominantly monophonic, and conduct a comprehensive comparative analysis against SOTA methods using a range of high-quality, publicly available polyphonic datasets. SSLAM not only improves model performance on polyphonic audio, but also maintains or exceeds performance on standard audio SSL benchmarks. Notably, it achieves up to a 3.9% improvement on AudioSet-2M (AS-2M), reaching a mean average precision (mAP) of 50.2. For polyphonic datasets, SSLAM sets a new SOTA in both linear evaluation and fine-tuning regimes, with performance improvements of up to 9.1% (mAP).

Contrastive learning has achieved great success in skeleton-based action recognition. However, most existing approaches encode the skeleton sequences as entangled spatiotemporal representations and confine the contrasts to the same level of representation. Instead, this paper introduces a novel contrastive learning framework, namely Spatiotemporal Clues Disentanglement Network (SCD-Net). Specifically, we integrate the decoupling module with a feature extractor to derive explicit clues from spatial and temporal domains respectively. As for the training of SCD-Net, with a constructed global anchor, we encourage the interaction between the anchor and extracted clues. Further, we propose a new masking strategy with structural constraints to strengthen the contextual associations, leveraging the latest development from masked image modelling into the proposed SCD-Net. We conduct extensive evaluations on the NTU-RGB+D (60&120) and PKU-MMD (I&II) datasets, covering various downstream tasks such as action recognition, action retrieval, transfer learning, and semi-supervised learning. The experimental results demonstrate the effectiveness of our method, which outperforms the existing state-of-the-art (SOTA) approaches significantly. Our code and supplementary material can be found at https://github.com/cong-wu/SCD-Net.
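
For readers unfamiliar with contrastive objectives, the sketch below shows a generic InfoNCE-style loss between an anchor embedding, one positive, and several negatives. It is a textbook formulation given for orientation; the abstract does not state the exact loss used in SCD-Net, and the function names, cosine similarity, and temperature value are assumptions.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def info_nce(anchor, positive, negatives, temperature=0.1):
    """Generic InfoNCE loss: -log softmax of the positive among all candidates."""
    logits = [cosine_similarity(anchor, positive) / temperature]
    logits += [cosine_similarity(anchor, n) / temperature for n in negatives]
    # Log-sum-exp with the maximum subtracted for numerical stability.
    peak = max(logits)
    log_sum = peak + math.log(sum(math.exp(l - peak) for l in logits))
    return log_sum - logits[0]


# Hypothetical 2-D embeddings: the loss is small when the positive matches
# the anchor and large when a negative matches it instead.
aligned = info_nce([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0]])
misaligned = info_nce([1.0, 0.0], [0.0, 1.0], [[1.0, 0.0]])
print(aligned < misaligned)
```

Minimising this quantity pulls the anchor towards its positive and pushes it away from the negatives, which is the general mechanism that contrastive frameworks build on.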

Convolutional neural networks (CNNs) and Transformer-based networks have recently enjoyed significant attention for various audio classification and tagging tasks following their wide adoption in the computer vision domain. Despite the difference in information distribution between audio spectrograms and natural images, there has been limited exploration of effective information retrieval from spectrograms using domain-specific layers tailored for the audio domain. In this paper, we leverage the power of the Multi-Axis Vision Transformer (MaxViT) to create DTF-AT (Decoupled Time-Frequency Audio Transformer) that facilitates interactions across time, frequency, spatial, and channel dimensions. The proposed DTF-AT architecture is rigorously evaluated across diverse audio and speech classification tasks, consistently establishing new benchmarks for state-of-the-art (SOTA) performance. Notably, on the challenging AudioSet 2M classification task, our approach demonstrates a substantial improvement of 4.4% when the model is trained from scratch and 3.2% when the model is initialised from ImageNet-1K pre-trained weights. In addition, we present comprehensive ablation studies to investigate the impact and efficacy of our proposed approach. The codebase and pretrained weights are available at https://github.com/ta012/DTFAT.git.

Recently, there has been a growing interest in RGB-D object tracking thanks to its promising performance achieved by combining visual information with auxiliary depth cues. However, the limited volume of annotated RGB-D tracking data for offline training has hindered the development of a dedicated end-to-end RGB-D tracker design. Consequently, the current state-of-the-art RGB-D trackers mainly rely on the visual branch to support the appearance modelling, with the depth map utilised for elementary information fusion or failure reasoning of online tracking. Despite the achieved progress, the current paradigms for RGB-D tracking have not fully harnessed the inherent potential of depth information, nor fully exploited the synergy of vision-depth information. Considering the availability of ample unlabelled RGB-D data and the advancement in self-supervised learning, we address the problem of self-supervised learning for RGB-D object tracking. Specifically, an RGB-D backbone network is trained on unlabelled RGB-D datasets using masked image modelling. To train the network, the masking mechanism creates a selective occlusion of the input visible image to force the corresponding aligned depth map to help with discerning and learning vision-depth cues for the reconstruction of the masked visible image. As a result, the pre-trained backbone network is capable of cooperating with crucial visual and depth features of the diverse objects and background in the RGB-D image. The intermediate RGB-D features output by the pre-trained network can effectively be used for object tracking. We thus embed the pre-trained RGB-D network into a transformer-based tracking framework for stable tracking. Comprehensive experiments and the analysis of the results obtained on several RGB-D tracking datasets demonstrate the effectiveness and superiority of the proposed RGB-D self-supervised learning framework and the following tracking approach. 
• A novel RGB-D backbone network based on self-supervised learning.
• Joint extraction of RGB-D feature representation for object localisation.
• A Transformer-based tracking method for RGB-D object tracking.
• Extensive experiments and analyses on four RGB-D tracking benchmarks.

Compositional actions consist of dynamic (verbs) and static (objects) concepts. Humans can easily recognize unseen compositions using the learned concepts. For machines, solving such a problem requires a model to recognize unseen actions composed of previously observed verbs and objects, thus requiring so-called compositional generalization ability. To facilitate this research, we propose a novel Zero-Shot Compositional Action Recognition (ZS-CAR) task. For evaluating the task, we construct a new benchmark, Something-composition (Sth-com), based on the widely used Something-Something V2 dataset. We also propose a novel Component-to-Composition (C2C) learning method to solve the new ZS-CAR task. C2C includes an independent component learning module and a composition inference module. Last, we devise an enhanced training strategy to address the challenges of component variations between seen and unseen compositions and to handle the subtle balance between learning seen and unseen actions. The experimental results demonstrate that the proposed framework significantly surpasses the existing compositional generalization methods and sets a new state-of-the-art. The new Sth-com benchmark and code are available at https://github.com/RongchangLi/ZSCAR_C2C.

Self-supervised pretraining (SSP) has emerged as a popular technique in machine learning, enabling the extraction of meaningful feature representations without labelled data. In the realm of computer vision, pretrained vision transformers (ViTs) have played a pivotal role in advancing transfer learning. Nonetheless, the escalating cost of finetuning these large models has posed a challenge due to the explosion of model size. This study endeavours to evaluate the effectiveness of pure self-supervised learning (SSL) techniques in computer vision tasks, obviating the need for finetuning, with the intention of emulating human-like capabilities in generalisation and recognition of unseen objects. To this end, we propose an evaluation protocol for zero-shot segmentation based on a prompting patch. Given a point on the target object as a prompt, the algorithm calculates the similarity map between the selected patch and the other patches; a simple thresholding is then applied to segment the target. Another evaluation measures intra-object and inter-object similarity to gauge the discriminatory ability of SSP ViTs. Insights from prompt-based zero-shot segmentation and the discriminatory abilities of SSP led to the design of a simple SSP approach, termed MMC. This approach combines Masked image modelling for encouraging similarity of local features, Momentum-based self-distillation for transferring semantics from global to local features, and global Contrast for promoting semantics of global features, to enhance discriminative representations of SSP ViTs. Consequently, our proposed method significantly reduces the overlap of intra-object and inter-object similarities, thereby facilitating effective object segmentation within an image. Our experiments reveal that MMC delivers top-tier results in zero-shot semantic segmentation across various datasets.
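
The prompting-patch protocol described above can be sketched in a few lines: take the feature of the prompted patch, compare it with every patch feature, and threshold the resulting similarity map. This is a minimal sketch under stated assumptions; the abstract does not name the similarity measure or the threshold, so cosine similarity, the value 0.5, and all function names here are illustrative choices.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def segment_from_prompt(patch_features, prompt_index, threshold=0.5):
    """Segment by thresholding each patch's similarity to the prompted patch.

    patch_features: one feature vector per image patch (e.g. ViT patch tokens).
    prompt_index:   index of the patch containing the user-provided point.
    Returns the similarity map and a boolean mask over patches.
    """
    prompt = patch_features[prompt_index]
    similarity_map = [cosine(prompt, feature) for feature in patch_features]
    mask = [score >= threshold for score in similarity_map]
    return similarity_map, mask


# Hypothetical 2-D patch features: the first two patches belong to the target.
features = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [-1.0, 0.0]]
similarities, mask = segment_from_prompt(features, prompt_index=0)
print(mask)
```

Because no parameters are trained, the quality of the mask depends entirely on how well the pretrained features separate intra-object from inter-object similarity, which is what the protocol is meant to probe.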

Transformers, which were originally developed for natural language processing, have recently generated significant interest in the computer vision and audio communities due to their flexibility in learning long-range relationships. Constrained by the data-hungry nature of transformers and the limited amount of labelled data, most transformer-based models for audio tasks are finetuned from ImageNet pretrained models, despite the huge gap between the domain of natural images and audio. This has motivated the research in self-supervised pretraining of audio transformers, which reduces the dependency on large amounts of labeled data and focuses on extracting concise representations of audio spectrograms. In this paper, we propose Local-Global Audio Spectrogram vIsion Transformer, namely ASiT, a novel self-supervised learning framework that captures local and global contextual information by employing group masked model learning and self-distillation. We evaluate our pretrained models on both audio and speech classification tasks, including audio event classification, keyword spotting, and speaker identification. We further conduct comprehensive ablation studies, including evaluations of different pretraining strategies. The proposed ASiT framework significantly boosts the performance on all tasks and sets a new state-of-the-art performance in five audio and speech classification tasks, outperforming recent methods, including the approaches that use additional datasets for pretraining.

Recently, masked image modeling (MIM), an important self-supervised learning (SSL) method, has drawn attention for its effectiveness in learning data representation from unlabeled data. Numerous studies underscore the advantages of MIM, highlighting how models pretrained on extensive datasets can enhance the performance of downstream tasks. However, the high computational demands of pretraining pose significant challenges, particularly within academic environments, thereby impeding the SSL research progress. In this study, we propose efficient training recipes for MIM-based SSL that focus on mitigating data loading bottlenecks and employing progressive training techniques and other tricks to closely maintain pretraining performance. Our library enables the training of a MAE-Base/16 model on the ImageNet 1K dataset for 800 epochs within just 18 hours, using a single machine equipped with 8 A100 GPUs. By achieving speed gains of up to 5.8 times, this work not only demonstrates the feasibility of conducting high-efficiency SSL training but also paves the way for broader accessibility and promotes advancement in SSL research, particularly for prototyping and initial testing of SSL ideas.
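
To put the reported figures in perspective, the short calculation below estimates the average data throughput implied by 800 epochs in 18 hours on 8 GPUs. It assumes that every epoch visits the full ImageNet-1K training split of 1,281,167 images and that the 18 hours is total wall-clock time; the abstract does not state either point, and the function name is illustrative.

```python
IMAGENET_1K_TRAIN_IMAGES = 1_281_167  # size of the ImageNet-1K training split


def required_throughput(epochs, hours, num_gpus,
                        dataset_size=IMAGENET_1K_TRAIN_IMAGES):
    """Average images per second (overall and per GPU) implied by a training budget."""
    total_images = epochs * dataset_size
    per_second = total_images / (hours * 3600)
    return per_second, per_second / num_gpus


total_rate, per_gpu_rate = required_throughput(epochs=800, hours=18, num_gpus=8)
print(f"{total_rate:,.0f} images/s overall, {per_gpu_rate:,.0f} images/s per GPU")
# prints "15,817 images/s overall, 1,977 images/s per GPU"
```

Sustaining a rate of this order requires the input pipeline to keep pace with the GPUs, which is consistent with the abstract's emphasis on data loading bottlenecks.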

Deep neural networks have enhanced the performance of decision making systems in many applications, including image understanding, and further gains can be achieved by constructing ensembles. However, designing an ensemble of deep networks is often not very beneficial since the time needed to train the networks is generally very high or the performance gain obtained is not very significant. In this paper, we analyse an error correcting output coding (ECOC) framework for constructing ensembles of deep networks and propose different design strategies to address the accuracy-complexity trade-off. We carry out an extensive comparative study between the introduced ECOC designs and the state-of-the-art ensemble techniques such as ensemble averaging and gradient boosting decision trees. Furthermore, we propose a fusion technique that is shown to achieve the highest classification performance.
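
As background for the ECOC framework mentioned above, the sketch below shows the standard decoding step: each class is assigned a binary codeword, each base classifier predicts one bit, and the predicted class is the one whose codeword is nearest in Hamming distance. The code matrix, class names, and function names are made up for illustration and are not the designs proposed in the paper.

```python
# Hypothetical code matrix: one 6-bit codeword per class. Any two codewords
# differ in 4 positions, so a single wrong bit can still be corrected.
CODE_MATRIX = {
    "cat":  (1, 1, 1, 0, 0, 0),
    "dog":  (0, 0, 1, 1, 1, 0),
    "bird": (1, 0, 0, 1, 0, 1),
}


def hamming_distance(a, b):
    """Number of positions at which two equal-length bit tuples differ."""
    return sum(x != y for x, y in zip(a, b))


def ecoc_decode(predicted_bits, code_matrix=CODE_MATRIX):
    """Return the class whose codeword is closest to the predicted bit string."""
    return min(code_matrix,
               key=lambda label: hamming_distance(code_matrix[label], predicted_bits))


# One base classifier (the second bit) is wrong, yet decoding recovers "cat".
print(ecoc_decode((1, 0, 1, 0, 0, 0)))
```

Each column of the code matrix defines a binary problem for one base network, so the number of columns controls the ensemble size and hence the accuracy-complexity trade-off discussed in the abstract.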