Dr Joaquin M. Prada

About

Biography

I am a mathematical modeller, with a background in industrial engineering, working in epidemiology to inform public health interventions. My research is highly multidisciplinary, combining mathematics, statistics, computer science, biology, economics, and social sciences. My primary focus is to inform surveillance, control, and elimination strategies for infectious diseases, particularly zoonoses and Neglected Tropical Diseases (NTDs). Across these diseases, I have led the development of stochastic population- and individual-based mathematical models of disease transmission, using Bayesian statistical approaches for their calibration and exploring the use of machine-learning approaches and optimization algorithms, and integration with other disciplines. This has led to very fruitful international collaborations across Europe, Latin America, Sub-Saharan Africa, South-East Asia, and the US.

I have served on various funding panels (e.g. UKRI, Wellcome Trust) and international stakeholder working groups, and I chaired the United Against Rabies Forum (a WHO-WOAH-FAO initiative) “tool evaluation” workstream. I currently hold a visiting research position at North Carolina State University and previously held similar positions at the University of Warwick and Oxford University.

As faculty, I have mentored undergraduate and postgraduate tutees, supervised several undergraduate research projects, and lead a research group (@Pradalab on Twitter/X) funded by national and international agencies. Some of the group's interests are:

Sustainable developments for Neglected Communities

My research group has developed mathematical models across a range of diseases to directly address World Health Organisation priority questions. We have collaborated extensively internationally, collating results from different mathematical models, to address key policy questions, such as the impact of COVID-19 on NTD programmes. We integrate our mathematical models with economic analysis, accounting for social and environmental drivers. Many of the diseases we tackle have a zoonotic component, thus a One Health approach, recognising the interconnection between humans, animals, and their shared environment, is needed. Outputs from our work directly influence policy in the development of guidelines for public and animal health interventions.

At Surrey, I was co-lead of the “Sustainable developments for Neglected Communities” programme of the Institute for Sustainability.

Improving the evaluation of diagnostics

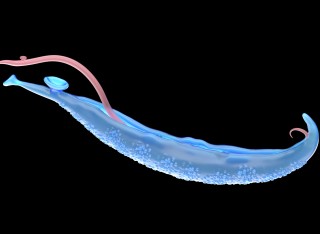

One challenge of many parasitic diseases, such as Schistosomiasis or Echinococcosis, is the absence of a “gold standard” diagnostic that can be readily used. In my research group, we have led the development of Hidden Markov Models (Latent Class Analysis) for estimating infection status in a community and comparing different diagnostics. This work builds on methodology I have developed over the years, which we have used across multiple parasitic diseases. One outcome of this work is the identification of thresholds that can be used by control and elimination programmes.
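As a rough illustration of how infection status can be estimated without a gold standard, the sketch below fits a basic two-class latent class model by expectation-maximisation. It is not the group's model: it assumes three diagnostics that are conditionally independent given true infection status, and the function names, starting values, and the synthetic "true" prevalence, sensitivities, and specificities are all hypothetical.

```python
from itertools import product


def pattern_likelihoods(pattern, prev, sens, spec):
    """Joint probability of a test-result pattern with infected / uninfected status."""
    p_inf, p_un = prev, 1 - prev
    for j, y in enumerate(pattern):
        p_inf *= sens[j] if y else (1 - sens[j])
        p_un *= (1 - spec[j]) if y else spec[j]
    return p_inf, p_un


def expected_counts(prev, sens, spec, n=1000):
    """Synthetic data: expected number of individuals showing each result pattern."""
    counts = {}
    for pattern in product((0, 1), repeat=len(sens)):
        counts[pattern] = n * sum(pattern_likelihoods(pattern, prev, sens, spec))
    return counts


def fit_latent_class(counts, n_tests=3, n_iter=2000):
    """EM estimates of prevalence, sensitivity, and specificity (hypothetical helper)."""
    prev, sens, spec = 0.3, [0.8] * n_tests, [0.8] * n_tests  # arbitrary starting values
    total = sum(counts.values())
    for _ in range(n_iter):
        # E-step: posterior probability of infection for each result pattern
        post = {}
        for pattern in counts:
            p_inf, p_un = pattern_likelihoods(pattern, prev, sens, spec)
            post[pattern] = p_inf / (p_inf + p_un)
        # M-step: update parameters from the expected class memberships
        n_inf = sum(counts[k] * post[k] for k in counts)
        n_un = total - n_inf
        prev = n_inf / total
        for j in range(n_tests):
            sens[j] = sum(counts[k] * post[k] * k[j] for k in counts) / n_inf
            spec[j] = sum(counts[k] * (1 - post[k]) * (1 - k[j]) for k in counts) / n_un
    return prev, sens, spec


# Hypothetical "true" values used only to generate the synthetic counts
true_prev, true_sens, true_spec = 0.2, [0.7, 0.8, 0.9], [0.95, 0.9, 0.85]
counts = expected_counts(true_prev, true_sens, true_spec)
prev_hat, sens_hat, spec_hat = fit_latent_class(counts)
print(round(prev_hat, 3), [round(s, 3) for s in sens_hat], [round(s, 3) for s in spec_hat])
```

With fewer than three tests in a single population this simple model is not identifiable, which is one reason applied work typically relies on richer (for example Bayesian) formulations.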

Strategies to control livestock diseases

Engaging with partners both in the UK, such as the Pirbright Institute, and internationally, such as North Carolina State University, we have developed statistical and mathematical models to support guidance to farmers and government bodies. In particular, we have worked on Foot and Mouth Disease in cattle, respiratory viruses in pigs (such as Porcine Reproductive and Respiratory Syndrome virus, Porcine Epidemic Diarrhoea virus and African Swine Fever virus), and nematodes in sheep. The outcomes of this work help reduce the impact these diseases have on the industry, while also optimising the use of drugs and vaccines.

University roles and responsibilities

- Co-director Surrey Institute for People-Centred Artificial Intelligence

- Lead PradaLab

Sustainable development goals

My research interests are related to the following:

Publications

Purpose: Schistosomiasis (SCH) japonica remains a persistent public health concern in the Philippines despite continuing control efforts. This study aims to examine the transmission dynamics of SCH japonica and evaluate different intervention strategies using a One Health modeling approach, with the goal of supporting feasible control and elimination targets. Methods: We developed a compartmental mathematical model calibrated using field survey data collected in 2022 from eight endemic barangays in Agusan del Sur and Surigao del Norte. The dataset included SCH prevalence, egg excretion levels in humans and animals quantified through Kato-Katz, modified McMaster, and sedimentation techniques, and household distance to potential transmission sites. Multiple intervention strategies were examined, including human and animal chemotherapy, WaSH (water access, sanitation, and hygiene) adoption, pasture prohibition, vegetation clearing, and snail control. Sensitivity analysis using Partial Rank Correlation Coefficients (PRCC) was performed to identify influential transmission drivers. Results: The model estimates baseline prevalence at approximately 20% in humans across the study areas. Under medium WaSH adoption, human prevalence is projected to decline to approximately 1.01% by 2030, whereas high WaSH coverage further reduces prevalence to 0.64%. Combining WaSH and pasture prohibition alongside chemotherapy is projected to reduce human prevalence to 0.09% and animal prevalence to 0.10% by 2030. Sensitivity analysis identified snail-to-human transmission rate (PRCC = 0.612) and snail shedding rate (PRCC = 0.607) as the most influential parameters. Conclusion: Integrated strategies focusing on WaSH, reduced animal exposure, and targeted chemotherapy offer the most effective pathway toward achieving the World Health Organization's (WHO's) 2030 SCH targets. Implementation should be strengthened through health education, behavioral interventions, mechanization support, and active Local Government Unit (LGU) participation.

Neglected tropical diseases (NTDs) disproportionately affect the poorest populations. To meet the 2030 World Health Organization (WHO) roadmap targets, integrated and cross-cutting approaches are recommended to streamline programmatic operations across NTDs. Integration is not a new concept, but given recent policy shifts, disruptions to programmes and funding constraints, it is increasingly important. We consider integration as opportunities for coordination or collaboration across NTDs. Research is needed to identify the criteria and requirements necessary for successful programme integration, assess barriers, and determine the relative importance of each criterion to identify potential disease pairings. We applied Multi-Criteria Decision Analysis (MCDA) methodologies to gather expert and stakeholder insights on integrated control programmes for NTDs. During a facilitated workshop, participants discussed their interpretations of the terms 'programmatic integration' and 'cross-cutting' in relation to integrating NTD programmes. Eleven criteria for integration were identified and weighted by participants. Using WHO Roadmap baseline values, pairwise disease combinations were assessed by multiplying criterion weightings with disease scores, generating a priority matrix. Workshop participants weighted community engagement and common vectors and transmission routes as the most important of the criteria. Three disease combinations with the highest potential for integration were identified: Dengue and Chikungunya; Taeniasis & Cysticercosis and Echinococcosis; and Trachoma and Lymphatic Filariasis. The workshop outcomes provide valuable insights into key factors for integrating NTD control programmes and highlight potential disease pairings for further exploration. While some disease matches were expected, others were less obvious. The highest-scoring combinations should now be further evaluated for integration potential.
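The weighting step described above can be sketched as a small weighted-sum calculation. This is only an illustration under stated assumptions: the abstract does not say how two diseases' scores are combined for a pair, so the sketch assumes the per-criterion pair score is the product of the two disease scores, and the criterion names, weights, disease labels, and scores are all hypothetical placeholders rather than workshop results.

```python
from itertools import combinations


def priority_matrix(weights, scores):
    """Weighted-sum score for every disease pair (hypothetical helper).

    weights: criterion -> raw weight (normalised here to sum to 1)
    scores:  disease -> {criterion -> score in [0, 1]}
    Assumption: a pair's score on a criterion is the product of both diseases' scores.
    """
    total = sum(weights.values())
    norm = {criterion: w / total for criterion, w in weights.items()}
    matrix = {}
    for a, b in combinations(sorted(scores), 2):
        matrix[(a, b)] = sum(norm[c] * scores[a][c] * scores[b][c] for c in norm)
    return matrix


# Hypothetical weights and scores, for illustration only
weights = {"community_engagement": 3.0, "shared_vectors": 2.0, "shared_diagnostics": 1.0}
scores = {
    "disease_A": {"community_engagement": 1.0, "shared_vectors": 1.0, "shared_diagnostics": 0.0},
    "disease_B": {"community_engagement": 1.0, "shared_vectors": 1.0, "shared_diagnostics": 1.0},
    "disease_C": {"community_engagement": 0.0, "shared_vectors": 0.0, "shared_diagnostics": 1.0},
}

matrix = priority_matrix(weights, scores)
ranked = sorted(matrix, key=matrix.get, reverse=True)
for pair in ranked:
    print(pair, round(matrix[pair], 3))
```

Normalising the weights keeps every pair score between 0 and 1, which makes pairings comparable even if participants use different raw weighting scales.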

Background: Antibiotic resistance increasingly threatens the interconnected health of humans, animals, and the environment. While misuse of antibiotics is a known driver, environmental factors also play a critical role. A balanced One Health approach, including the environmental sector, is necessary to understand the emergence and spread of resistance. Methods: We systematically searched English-language literature (1990–2021) in MEDLINE, Embase, and Web of Science, plus grey literature. Titles, abstracts, and keywords were screened, followed by full-text reviews using a structured codebook and dual-reviewer assessments. Results: Of 13,667 records screened, 738 met the inclusion criteria. Most studies focused on freshwater and terrestrial environments, particularly associated with wastewater or manure sources. Evidence of research has predominantly focused on Escherichia coli and Pseudomonas spp., with a concentration on ARGs conferring resistance to sulphonamides (sul1–3), tetracyclines (tet), and beta-lactams. Additionally, the People’s Republic of China has produced a third of the studies (twice that of the next country, the United States), and research was largely domestic, with closely linked author networks. Conclusion: Significant evidence gaps persist in understanding antibiotic resistance in non-built environments, particularly in marine, atmospheric, and non-agricultural settings. Stressors such as climate change and microplastics remain notably under-explored. There is also an urgent need for more research in low-income regions, which face higher risks of antibiotic resistance, to support the development of targeted, evidence-based interventions.

Human toxocariasis is a worldwide parasitic disease caused by zoonotic roundworms of the genus Toxocara, which can cause blindness and epilepsy. The aim of this study was to estimate the risk of food-borne transmission of Toxocara spp. to humans in the UK by developing mathematical models created in a Bayesian framework. Parameter estimation was based on published experimental studies and field data from southern England, with qPCR Cq values used as a measure of eggs in spinach portions and ELISA optical density data as an indirect measure of larvae in meat portions. The average human risk of Toxocara spp. infection, per portion consumed, was estimated as 0.016% (95% CI: 0.000–0.100%) for unwashed leafy vegetables and 0.172% (95% CI: 0.000–0.400%) for undercooked meat. The average proportion of meat portions estimated positive for Toxocara spp. larvae was 0.841% (95% CI: 0.300–1.400%), compared to 0.036% (95% CI: 0.000–0.200%) of spinach portions containing larvated Toxocara spp. eggs. Overall, the models estimated a low risk of infection with Toxocara spp. by consuming these foods. However, given the potentially severe human health consequences of toxocariasis, intervention strategies to reduce environmental contamination with Toxocara spp. eggs and correct food preparation are advised.

Porcine reproductive and respiratory syndrome virus (PRRSV) poses a significant threat to pig health, particularly in multi-site industrial pig farming systems. These systems, which involve raising pigs at different locations based on their age and transporting them via trucks and trailers, may increase the risk of pathogen transmission through contaminated vehicles. Given the importance of preventing PRRSV infection, vehicle cleaning and disinfection (C&D) are crucial for disease control. This study examined data from four PRRSV outbreaks in U.S. sow farms during a regional emergence of a PRRSV2 variant in multi-site swine production systems, focusing on vehicle movements before and after the outbreaks and their C&D frequencies. This research analysed 1190 vehicle movement records between premises and 753 visits to truck wash stations, creating networks encompassing seven vehicles across 45 sites, including breeding, growing, and isolation facilities. Network simulations were used to evaluate the infection sources and virus transmission risks under various vehicle C&D frequencies during outbreaks. Results showed no significant changes in movement frequency before and after the outbreak. The infection risk varied by farm type and specific connections to the outbreak farm, with higher risks observed in growing farms. Scenario comparisons to assess the impact of C&D frequencies on transmission showed that adherence to vehicle C&D protocols resulted in infection risks nearly matching optimal scenarios. The most substantial differences were observed when comparing infection probabilities in the pessimistic scenario to those in the realistic scenario, with probabilities being significantly higher in the pessimistic scenario, particularly for gilt isolation farms (7.6% higher) and sow farms (6.6% higher). These findings underscore the critical role of thorough cleaning and disinfection protocols in reducing infection risk. This research assesses the risks of infection introduction and spread during outbreaks, emphasising the critical role of truck washing in controlling PRRSV. The study provides quantitative evidence that consistent vehicle C&D practices significantly reduce transmission risks, offering valuable insights into disease transmission pathways. These findings inform targeted interventions to enhance biosecurity and strengthen disease prevention strategies.

Rabies is an important zoonotic disease responsible for 59,000 human deaths worldwide each year. More than a third of these deaths occur in Africa. The first step in controlling rabies is establishing the burden of disease through data analysis and investigating regional risk to help prioritise resources. Here, we evaluated the surveillance data collected over the last decade in Nigeria (2014–2023). A spatio-temporal model was developed using the NIMBLE (1.2.1) package in R to assess outbreak risk. Our analysis found a high risk of canine rabies outbreaks in Plateau state and its surrounding states, as well as increased trends of outbreaks from July to September. The high number of reported canine rabies outbreaks in the North Central region could be due to cross-border transmission or improved reporting in the area. However, this could be confounded by potential reporting bias, with 8 out of 37 states (21.6%) never reporting a single outbreak in the period studied. Improving surveillance efforts will highlight states and regions in need of prioritisation for vaccinations and post-exposure prophylaxis. Using a One Health approach will likely help improve reporting, such as through integrated bite-case management, creating a more sustainable solution for the epidemiology of rabies in Nigeria in the future.

The accelerating rate of global climate and environmental changes is expected to affect the distribution, frequency, and patterns of established infectious diseases, as well as the emergence and re-emergence of both new and known diseases. Salmonella is a leading cause of foodborne illnesses in Europe, accounting for nearly one in three foodborne outbreaks. The seasonal pattern observed in reported cases of human salmonellosis suggests that weather may be a relevant driver of disease. Many studies show associations of salmonellosis with weather factors, but the exact extent of this influence is still unclear. Elucidating how the disease depends on relevant weather factors provides insights into the underlying mechanisms of transmission and provides a tool to anticipate the risk when relevant weather factors are known. This study provides new insights into the relationship between weather factors and the occurrence of salmonellosis, addressing a crucial issue in the context of climate change. By utilizing long-term, high-resolution epidemiological data from England and Wales linked with local weather data, the study offers a comprehensive phenomenological description of specific weather conditions that are related to the incidence of salmonellosis. Unlike previous studies that often rely on regression models or predefined parameterizations, the methodology used in this study employs a transparent and straightforward approach to estimate disease incidence based on a wide range of 14 local weather factors linked to individual cases. A key contribution of this study is its ability to account for the simultaneous effect of up to three weather factors, providing a more holistic understanding of their combined impact on disease incidence. Air temperature (>10°C), relative humidity, precipitation (dry conditions), dewpoint temperature (7-10°C), and day length (12-15h) were identified as key weather factors associated with salmonellosis, irrespective of geographical location. These findings were validated in both England and Wales and the Netherlands, which encourages the application of the model in other regions with different climatic and social characteristics to gain new insights on the incidence of salmonellosis. Likewise, the methodology can be adapted to explore other environmental factors, such as land use, proximity to animal farms, or socio-economic factors, providing a more holistic understanding of disease dynamics. The methodology used in this study, the conditional incidence, provides a robust framework to select key weather factors and exclude less relevant ones and to better understand climate-sensitive diseases and their response to climate changes. Early warning systems enhanced with weather data can improve predictions of incidence patterns and tailor interventions to specific geographic areas.

As the complexity of health systems has increased over time, there is an urgent need for developing multi-sectoral and multi-disciplinary collaborations within the domain of One Health (OH). Despite the efforts to promote collaboration in health surveillance and overcome professional silos, implementing OH surveillance systems in practice remains challenging for multiple reasons. In this study, we describe the lessons learned from the evaluation of OH surveillance using OH-EpiCap (an online evaluation tool for One Health epidemiological surveillance capacities and capabilities), the challenges identified with the implementation of OH surveillance, and the main barriers that contribute to its sub-optimal functioning, as well as possible solutions to address them. We conducted eleven case studies targeting the multi-sectoral surveillance systems for antimicrobial resistance in Portugal and France, Salmonella in France, Germany, and the Netherlands, Listeria in the Netherlands, Finland and Norway, Campylobacter in Norway and Sweden, and psittacosis in Denmark. These evaluations facilitated the identification of common strengths and weaknesses, focusing on the organization and functioning of existing collaborations and their impacts on the surveillance system. Lack of operational and shared leadership, adherence to FAIR data principles, sharing of techniques, and harmonized indicators led to poor organization and sub-optimal functioning of OH surveillance systems. In the majority of studied systems, the effectiveness, operational costs, behavioral changes, and population health outcomes brought by the OH surveillance over traditional surveillance (i.e. compartmentalized into sectors) have not been evaluated. To this end, the establishment of a formal governance body with representatives from each sector could assist in overcoming long-standing barriers. Moreover, demonstrating the impacts of OH-ness of surveillance may facilitate the implementation of OH surveillance systems.

Although international health agencies encourage the development of One Health (OH) surveillance, many systems remain mostly compartmentalized, with limited collaborations among sectors and disciplines. In the framework of the OH European Joint Programme “MATRIX” project, a generic evaluation tool called OH-EpiCap has been developed to enable individual institutes/governments to characterize, assess and monitor their own OH epidemiological surveillance capacities and capabilities. The tool is organized around three dimensions: organization, operational activities, and impact of the OH surveillance system; each dimension is then divided into four targets, each including four indicators. A semi-quantitative questionnaire enables the scoring of each indicator, with four levels according to the degree of satisfaction in the studied OH surveillance system. The evaluation is conducted by a panel of surveillance representatives (during a half-day workshop or with a back-and-forth process to reach a consensus). An R Shiny-based web application facilitates implementation of the evaluation and visualization of the results, and includes a benchmarking option. The tool was piloted on several foodborne hazards (i.e., Salmonella, Campylobacter, Listeria), emerging threats (e.g., antimicrobial resistance) and other zoonotic hazards (psittacosis) in multiple European countries in 2022. These case studies showed that the OH-EpiCap tool supports the tracing of strengths and weaknesses in epidemiological capacities and the identification of concrete and direct actions to improve collaborative activities at all steps of surveillance. It appears complementary to the existing EU-LabCap tool, designed to assess the capacity and capability of European microbiology laboratories. In addition, it provides an opportunity to reinforce trust between surveillance stakeholders from across the system and to build a good foundation for a professional network for further collaboration.
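The three-dimension, four-target, four-indicator layout described above maps naturally onto a nested structure. The sketch below is not the OH-EpiCap application (which is R Shiny-based); it is a hypothetical Python illustration that assumes indicator levels are coded 1 to 4 and that targets and dimensions are summarised by simple means. The abstract does not state the aggregation rule, and all scores shown are made up.

```python
from statistics import mean


def summarise(scores):
    """Average indicator scores per target, then target means per dimension.

    scores: dimension -> {target -> list of four indicator scores (1-4)}
    Assumption: simple means are used for aggregation (not stated in the abstract).
    """
    for dimension, targets in scores.items():
        if len(targets) != 4:
            raise ValueError(f"{dimension}: expected 4 targets")
        for target, indicators in targets.items():
            if len(indicators) != 4 or not all(1 <= s <= 4 for s in indicators):
                raise ValueError(f"{dimension}/{target}: expected 4 scores between 1 and 4")
    target_means = {
        dimension: {target: mean(indicators) for target, indicators in targets.items()}
        for dimension, targets in scores.items()
    }
    dimension_means = {
        dimension: mean(per_target.values()) for dimension, per_target in target_means.items()
    }
    return target_means, dimension_means


# Hypothetical panel scores; target names are placeholders
panel_scores = {
    "organization": {f"target_{i}": [4, 4, 4, 4] for i in range(1, 5)},
    "operational_activities": {f"target_{i}": [1, 2, 3, 4] for i in range(1, 5)},
    "impact": {f"target_{i}": [1, 1, 1, 1] for i in range(1, 5)},
}

target_means, dimension_means = summarise(panel_scores)
print(dimension_means)
```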

The global 2030 goal set by the World Organization for Animal Health (WOAH), the World Health Organization (WHO), and the Food and Agriculture Organization (FAO), to eliminate dog-mediated human rabies deaths, has undeniably been a catalyst for many countries to re-assess existing dog rabies control programmes. Additionally, the 2030 Agenda for Sustainable Development includes a blueprint for global targets that will benefit people and secure the health of the planet. Rabies is acknowledged as a disease of poverty, but the connections between economic development and rabies control and elimination are poorly quantified, yet they are critical evidence for planning and prioritisation. We have developed multiple generalised linear models to model the relationship between health care access, poverty, and death rate as a result of rabies, with separate indicators that can be used at country level: total Gross Domestic Product (GDP), and current health expenditure as a percentage of the total gross domestic product (% GDP) as an indicator of economic growth; and a metric of poverty assessing the extent and intensity of deprivation experienced at the individual level (Multidimensional Poverty Index, MPI). Notably, there was no detectable relationship between GDP or current health expenditure (% GDP) and death rate from rabies. However, MPI showed statistically significant relationships with per capita rabies deaths and the probability of receiving lifesaving post-exposure prophylaxis. We highlight that those most at risk of not being treated, and dying due to rabies, live in communities experiencing health care inequalities, readily measured through poverty indicators. These data demonstrate that economic growth alone may not be enough to meet the 2030 goal. Indeed, other strategies such as targeting vulnerable populations and responsible pet ownership are also needed in addition to economic investment.

The Middle East, Eastern Europe, Central Asia and North Africa Rabies Control Network (MERACON), is built upon the achievements of the Middle East and Eastern Europe Rabies Expert Bureau (MEEREB). MERACON aims to foster collaboration among Member States (MS) and develop shared regional objectives, building momentum towards dog-mediated rabies control and elimination. Here we assess the epidemiology of rabies and preparedness in twelve participating MS, using case and rabies capacity data for 2017, and compare our findings with previous published reports and a predictive burden model. Across MS, the number of reported cases of dog rabies per 100,000 dog population and the number of reported human deaths per 100,000 population as a result of dog-mediated rabies appeared weakly associated. Compared to 2014 there has been a decrease in the number of reported human cases in five of the twelve MS, three MS reported an increase, two MS continued to report zero cases, and the remaining two MS were not listed in the 2014 study and therefore no comparison could be drawn. Vaccination coverage in dogs has increased since 2014 in half (4/8) of the MS where data are available. Most importantly, it is evident that there is a need for improved data collection, sharing and reporting at both the national and international levels. With the formation of the MERACON network, MS will be able to align with international best practices, while also fostering international support with other MS and international organisations.

Lymphatic filariasis (LF) is a neglected tropical disease targeted for elimination as a public health problem by 2030. Although mass treatments have led to huge reductions in LF prevalence, some countries or regions may find it difficult to achieve elimination by 2030 owing to various factors, including local differences in transmission. Subnational projections of intervention impact are a useful tool in understanding these dynamics, but correctly characterizing their uncertainty is challenging. We developed a computationally feasible framework for providing subnational projections for LF across 44 sub-Saharan African countries using ensemble models, guided by historical control data, to allow assessment of the role of subnational heterogeneities in global goal achievement. Projected scenarios include ongoing annual treatment from 2018 to 2030, enhanced coverage, and biannual treatment. Our projections suggest that progress is likely to continue well. However, highly endemic locations currently deploying strategies with the lower World Health Organization recommended coverage (65%) and frequency (annual) are expected to have slow decreases in prevalence. Increasing intervention frequency or coverage can accelerate progress by up to 5 or 6 years, respectively. While projections based on baseline data have limitations, our methodological advancements provide assessments of potential bottlenecks for the global goals for LF arising from subnational heterogeneities. In particular, areas with high baseline prevalence may face challenges in achieving the 2030 goals, extending the "tail" of interventions. Enhancing intervention frequency and/or coverage will accelerate progress. Our approach facilitates preimplementation assessments of the impact of local interventions and is applicable to other regions and neglected tropical diseases.

The WHO aims to eliminate schistosomiasis as a public health problem by 2030. However, standard morbidity measures poorly correlate to infection intensities, hindering disease monitoring and evaluation. This is exacerbated by insufficient evidence on Schistosoma's impact on health-related quality of life (HRQoL). We conducted community-based cross-sectional surveys and parasitological examinations in moderate-to-high Schistosoma mansoni endemic communities in Uganda. We calculated parasitic infections and used EQ-5D instruments to estimate and compare HRQoL utilities in these populations. We further employed Tobit/linear regression models to predict HRQoL determinants. Two-thirds of the 560 participants were diagnosed with parasitic infection(s), 49% having S. mansoni. No significant negative association was observed between HRQoL and S. mansoni infection status/intensity. However, severity of pain urinating (beta = -0.106; s.e. = 0.043) and body swelling (beta = -0.326; s.e. = 0.005), increasing age (beta = -0.016; s.e. = 0.033), reduced socio-economic status (beta = 0.128; s.e. = 0.032), and being unemployed predicted lower HRQoL. Symptom severity and socio-economic status were better predictors of short-term HRQoL than current S. mansoni infection status/intensity. This is key to disentangling the link between infection(s) and short-term health outcomes, and highlights the complexity of correlating current infection(s) with long-term morbidity. Further evidence is needed on long-term schistosomiasis-associated HRQoL, health and economic outcomes to inform the case for upfront investments in schistosomiasis interventions.

Cystic Echinococcosis (CE) as a prevalent tapeworm infection of human and herbivorous animals worldwide, is caused by accidental ingestion of Echinococcus granulosus eggs excreted from infected dogs. CE is endemic in the Middle East and North Africa, and is considered as an important parasitic zoonosis in Iran. It is transmitted between dogs as the primary definitive host and different livestock species as the intermediate hosts. One of the most important measures for CE control is dog deworming with praziquantel. Due to the frequent reinfection of dogs, intensive deworming campaigns are critical for breaking CE transmission. Dog reinfection rate could be used as an indicator of the intensity of local CE transmission in endemic areas. However, our knowledge on the extent of reinfection in the endemic regions is poor. The purpose of the present study was to determine E. granulosus reinfection rate after praziquantel administration in a population of owned dogs in Kerman, Iran. A cohort of 150 owned dogs was recruited, with stool samples collected before praziquantel administration as a single oral dose of 5 mg/kg. The re-samplings of the owned dogs were performed at 2, 5 and 12 months following initial praziquantel administration. Stool samples were examined microscopically using Willis flotation method. Genomic DNA was extracted, and E. granulosus sensu lato-specific primers were used to PCR-amplify a 133-bp fragment of a repeat unit of the parasite genome. Survival analysis was performed using Kaplan-Meier method to calculate cumulative survival rates, which is used here to capture reinfection dynamics, and monthly incidence of infection, capturing also the spatial distribution of disease risk. Results of survival analysis showed 8, 12 and 17% total reinfection rates in 2, 5 and 12 months following initial praziquantel administration, respectively, indicating that 92, 88 and 83% of the dogs had no detectable infection in that same time periods. 
The monthly incidence of reinfection in the total owned dog population was estimated at 1.5% (95% CI 1.0-2.1). The results showed that the prevalence of echinococcosis in owned dogs, using a copro-PCR assay, was 42.6%. However, using conventional microscopy, 8% of fecal samples were positive for taeniid eggs. Our results suggest that regular treatment of the dog population with praziquantel every 60 days is ideal; however, the frequency of dog dosing faces major logistical and cost challenges, threatening the sustainability of control programs. Understanding the nature and extent of dog reinfection in the endemic areas is essential for successful implementation of control programs and understanding patterns of CE transmission. Cystic echinococcosis (CE), caused by the small tapeworm of dogs, Echinococcus granulosus, is considered a prevalent zoonotic infection of humans and livestock worldwide. Dogs play a crucial role in the parasite life cycle, serving as definitive hosts for the tapeworm and contributing to the transmission of the disease to humans and other livestock. Praziquantel (PZQ) dosing of dogs is proposed as one of the main elements of CE control programs. Frequent PZQ dosing is required because farm dogs are reinfected when fed offal, and this presents major logistical and financial problems. The frequency of PZQ dosing is dependent on the rate of dog reinfection in each endemic region. We explored the significance of farm dog reinfection in an endemic area in southeastern Iran, to provide a better understanding of CE transmission and to support the development of effective control programs. We monitored a cohort of 150 dogs previously treated for cystic echinococcosis with praziquantel. We showed that the prevalence of echinococcosis in the farm dogs, using a PCR assay, was 42.6% before praziquantel administration. To our surprise, a significant proportion of these dogs, approximately 17%, experienced reinfection with E. granulosus 12 months following initial praziquantel administration. This finding was both alarming and informative. Our study emphasized the importance of responsible dog ownership behavior and proper disposal of livestock offal in endemic areas.
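The Kaplan-Meier step mentioned in this abstract can be illustrated with a minimal sketch. The follow-up times and outcomes below are invented toy values, not the study data, and `kaplan_meier` and `monthly_incidence` are hypothetical helper names rather than the authors' code. The second helper assumes a constant monthly hazard, which is an assumption of this sketch and is not stated in the abstract.

```python
def kaplan_meier(times, events):
    """Kaplan-Meier estimate of the probability of remaining uninfected.

    times  : follow-up time (months) for each dog
    events : 1 if reinfection was detected at that time, 0 if censored
    Returns a list of (time, survival) pairs at each distinct event time.
    """
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        reinfected = 0
        leaving = 0
        # Group all dogs that share the same follow-up time.
        while i < len(data) and data[i][0] == t:
            reinfected += data[i][1]
            leaving += 1
            i += 1
        if reinfected:
            survival *= 1.0 - reinfected / n_at_risk
            curve.append((t, survival))
        n_at_risk -= leaving
    return curve


def monthly_incidence(cumulative, months):
    """Monthly incidence implied by a cumulative proportion,
    ASSUMING a constant monthly hazard (an assumption of this sketch)."""
    return 1.0 - (1.0 - cumulative) ** (1.0 / months)


# Toy cohort of 10 dogs (hypothetical values, not the study data).
toy_times = [2, 5, 5, 12, 12, 12, 12, 12, 12, 12]
toy_events = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
toy_curve = kaplan_meier(toy_times, toy_events)
for month, surv in toy_curve:
    print(f"month {month}: {surv:.3f} uninfected, {1 - surv:.3f} reinfected")
```

Under the constant-hazard assumption, `monthly_incidence(0.17, 12)` gives roughly 1.5% per month, in line with the monthly incidence reported in the abstract; whether the authors derived their estimate this way is not stated.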

The role of transportation vehicles, pig movement between farms, proximity to infected premises, and feed deliveries has not been fully considered in the dissemination dynamics of porcine epidemic diarrhea virus (PEDV). This has limited efforts for disease control and elimination restricting the development of risk-based resource allocation to the most relevant modes of PEDV dissemination. Here, we modeled nine modes of between-farm transmission pathways including farm-to-farm proximity (local transmission), contact network of pig farm movements between sites, four different contact networks of transportation vehicles (vehicles that transport pigs from farm-to-farm, pigs to markets, feed distribution and crew), the volume of animal by-products within feed diets (e.g. animal fat and meat and bone meal) to reproduce PEDV transmission dynamics. The model was calibrated in space and time with weekly PEDV outbreaks. We investigated the model performance to identify outbreak locations and the contribution of each route in the dissemination of PEDV. The model estimated that 42.7% of the infections in sow farms were related to vehicles transporting feed, 34.5% of infected nurseries were associated with vehicles transporting pigs to farms, and for both farm types, pig movements or local transmission were the next most relevant routes. On the other hand, finishers were most often (31.4%) infected via local transmission, followed by the vehicles transporting feed and pigs to farm networks. Feed ingredients did not significantly improve model calibration metrics. The proposed modeling framework provides an evaluation of PEDV transmission dynamics, ranking the most important routes of PEDV dissemination and granting the swine industry valuable information to focus efforts and resources on the most important transmission routes.

Neglected tropical diseases (NTDs) largely impact marginalised communities living in tropical and subtropical regions. Mass drug administration (MDA) is the leading intervention method for five NTDs; however, it is known that there is lack of access to treatment for some populations and demographic groups. It is also likely that those individuals without access to treatment are excluded from surveillance. It is important to consider the impacts of this on the overall success, and monitoring and evaluation (M&E), of intervention programmes. We use a detailed individual-based model of the infection dynamics of lymphatic filariasis to investigate the impact of excluded, untreated, and therefore unobserved groups on the true versus observed infection dynamics and subsequent intervention success. We simulate surveillance in four groups: the whole population eligible to receive treatment, the whole eligible population with access to treatment, the transmission assessment survey (TAS) focus of six- and seven-year-olds, and finally >20-year-olds. We show that the surveillance group under observation has a significant impact on perceived dynamics. Exclusion from treatment and surveillance negatively impacts the probability of reaching public health goals, though in populations that do reach these goals there are no signals to indicate excluded groups. Increasingly restricted surveillance groups over-estimate the efficacy of MDA. The presence of non-treated groups cannot be inferred when surveillance is only occurring in the group receiving treatment. Author summary: Mass drug administration (MDA) is the cornerstone of control for many neglected tropical diseases. As we move towards increasingly ambitious public health targets, it is critical to investigate ways in which MDA weaknesses can be strengthened. It is known that some individuals systematically choose not to participate in treatment. It is also becoming evident that others systematically do not have access to treatment. 
What is less clear, however, is how access to treatment correlates with inclusion in surveillance efforts, and in turn, how this impacts the monitoring and evaluation of intervention programmes. If individuals with access to treatment are more likely to be included in surveillance efforts, then this implies that those without access to treatment are likewise more likely to be excluded from surveillance. Extending the individual-based lymphatic filariasis model, TRANSFIL, we show that exclusion from treatment and surveillance negatively impacts the probability of reaching public health goals, though in populations that do reach these goals there are no signals to indicate excluded groups. Increasingly restricted surveillance groups over-estimate the efficacy of MDA. The presence of non-treated groups cannot be inferred when surveillance is only occurring in the group receiving treatment.

Lymphatic filariasis (LF) is a debilitating, poverty-promoting, neglected tropical disease (NTD) targeted for worldwide elimination as a public health problem (EPHP) by 2030. Evaluating progress towards this target for national programmes is challenging, due to differences in disease transmission and interventions at the subnational level. Mathematical models can help address these challenges by capturing spatial heterogeneities and evaluating progress towards LF elimination and how different interventions could be leveraged to achieve elimination by 2030. Here we used a novel approach to combine historical geo-spatial disease prevalence maps of LF in Ethiopia with 3 contemporary disease transmission models to project trends in infection under different intervention scenarios at subnational level. Our findings show that local context, particularly the coverage of interventions, is an important determinant for the success of control and elimination programmes. Furthermore, although current strategies seem sufficient to achieve LF elimination by 2030, some areas may benefit from the implementation of alternative strategies, such as using enhanced coverage or increased frequency, to accelerate progress towards the 2030 targets. The combination of geospatial disease prevalence maps of LF with transmission models and intervention histories enables the projection of trends in infection at the subnational level under different control scenarios in Ethiopia. This approach, which adapts transmission models to local settings, may be useful to inform the design of optimal interventions at the subnational level in other LF endemic regions.

Parasitic neglected tropical diseases (NTDs) or 'infectious diseases of poverty' continue to affect the poorest communities in the world, including in the Philippines. Socio-economic conditions contribute to persisting endemicity of these infectious diseases. As such, examining these underlying factors may help identify gaps in implementation of control programs. This study aimed to determine the prevalence of schistosomiasis and soil-transmitted helminthiasis (STH) and investigate the role of socio-economic and risk factors in the persistence of these diseases in endemic communities in the Philippines. This cross-sectional study involving a total of 1,152 individuals from 386 randomly-selected households was conducted in eight municipalities in Mindanao, the Philippines. Participants were asked to submit fecal samples which were processed using the Kato-Katz technique to check for intestinal helminthiases. Moreover, each household head participated in a questionnaire survey investigating household conditions and knowledge, attitude, and practices related to intestinal helminthiases. Associations between questionnaire responses and intestinal helminth infection were assessed. Results demonstrated an overall schistosomiasis prevalence of 5.7% and soil-transmitted helminthiasis prevalence of 18.8% in the study population. Further, the household questionnaire revealed high awareness of intestinal helminthiases, but lower understanding of routes of transmission. Potentially risky behaviors such as walking outside barefoot and bathing in rivers were common. There was a strong association between municipality and prevalence of helminth infection. Educational attainment and higher "practice" scores (relating to practices which are effective in controlling intestinal helminths) were inversely associated with soil-transmitted helminth infection. Results of the study showed remaining high endemicity of intestinal helminthiases in the area despite ongoing control programs. 
Poor socio-economic conditions and low awareness about how intestinal helminthiases are transmitted may be among the factors hindering success of intestinal helminth control programs in the provinces of Agusan del Sur and Surigao del Norte. Addressing these sustainability gaps could contribute to the success of alleviating the burden of intestinal helminthiases in endemic areas.

As the complexity of health systems has increased over time, there is an urgent need for developing multi-sectoral and multidisciplinary collaborations within the domain of One Health (OH) (1). Despite the efforts to promote such collaborations and break through discipline silos, implementing OH surveillance in practice remains difficult. Thus, it is key to identify the main challenges and barriers for the effective implementation and functioning of an integrated OH surveillance system (2).

Accurate epidemiological classification guidelines are essential to ensure implementation of adequate public health and social measures. Here, we investigate two frameworks, published in March 2020 and November 2020 by the World Health Organization (WHO) to categorise transmission risks of COVID-19 infection, and assess how well the countries' self-reported classification tracked their underlying epidemiological situation. We used three modelling approaches: an ordinal longitudinal model, a proportional odds model and a machine learning One-Rule classification algorithm. We applied these models to 202 countries' daily transmission classification and epidemiological data, and studied classification accuracy over time for the period April 2020 to June 2021, when WHO stopped publishing country classifications. Overall, the first published WHO classification, purely qualitative, lacked accuracy. The incidence rate within the previous 14 days was the best predictor, with an average accuracy throughout the period of study of 61.5%. However, when each week was assessed independently, the models returned predictive accuracies above 50% only in the first weeks of April 2020. In contrast, the second classification, quantitative in nature, significantly increased the accuracy of transmission labels, with values as high as 94%.
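For readers unfamiliar with the One-Rule (OneR) classifier named in this abstract, the idea can be sketched as follows. For each predictor, every observed value is mapped to its most frequent class, and the single predictor whose rule makes the fewest training errors is kept. The feature names, bins and labels below are invented for illustration and are not the study's data; `one_rule` is a hypothetical helper, and how the authors discretised continuous predictors is not stated in the abstract.

```python
from collections import Counter, defaultdict


def one_rule(rows, labels):
    """Fit a One-Rule classifier on categorical features.

    rows   : list of dicts mapping feature name -> categorical value
    labels : list of class labels, one per row
    Returns (best_feature, rule, training_accuracy), where rule maps
    each value of the chosen feature to its majority class.
    """
    best = None
    for feature in rows[0]:
        # Count class frequencies for every value of this feature.
        counts = defaultdict(Counter)
        for row, label in zip(rows, labels):
            counts[row[feature]][label] += 1
        rule = {value: c.most_common(1)[0][0] for value, c in counts.items()}
        correct = sum(rule[row[feature]] == label
                      for row, label in zip(rows, labels))
        accuracy = correct / len(rows)
        if best is None or accuracy > best[2]:
            best = (feature, rule, accuracy)
    return best


# Hypothetical toy data: binned 14-day indicators and a transmission label.
toy_rows = [
    {"incidence_14d": "low", "deaths_14d": "low"},
    {"incidence_14d": "low", "deaths_14d": "high"},
    {"incidence_14d": "high", "deaths_14d": "low"},
    {"incidence_14d": "high", "deaths_14d": "high"},
    {"incidence_14d": "high", "deaths_14d": "low"},
    {"incidence_14d": "low", "deaths_14d": "high"},
]
toy_labels = ["clusters", "clusters", "community",
              "community", "community", "community"]

best_feature, best_rule, best_accuracy = one_rule(toy_rows, toy_labels)
print(best_feature, best_rule, round(best_accuracy, 3))
```

Because the rule depends on a single predictor, it is easy to read off which epidemiological indicator best tracks the reported classification, which is the kind of comparison described in the abstract.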

Background: Human, animal, and environmental health are increasingly threatened by the emergence and spread of antibiotic resistance. Inappropriate use of antibiotic treatments commonly contributes to this threat, but it is also becoming apparent that multiple, interconnected environmental factors can play a significant role. Thus, a One Health approach is required for a comprehensive understanding of the environmental dimensions of antibiotic resistance and to inform science-based decisions and actions. The broad and multidisciplinary nature of the problem poses several open questions drawing upon a wide heterogeneous range of studies. Objective: This study seeks to collect and catalogue the evidence of the potential effects of environmental factors on the abundance or detection of antibiotic resistance determinants in the outdoor environment, i.e., antibiotic resistant bacteria and mobile genetic elements carrying antibiotic resistance genes, and the effect on those caused by local environmental conditions of either natural or anthropogenic origin. Methods: Here, we describe the protocol for a systematic evidence map (SEM) to address this, which will be performed in adherence to best practice guidelines. We will search the literature from 1990 to present, using the following electronic databases: MEDLINE, Embase, and the Web of Science Core Collection, as well as the grey literature. We shall include full-text scientific articles published in English. Reviewers will work in pairs to screen title, abstract and keywords first and then full-text documents. Data extraction will adhere to a purpose-designed code book. Risk of bias assessment will not be conducted as part of this SEM. We will combine tables, graphs, and other suitable visualisation techniques to i) compile a database of studies investigating the factors associated with the prevalence of antibiotic resistance in the environment and ii) map the distribution, network, cross-disciplinarity, impact and trends in the literature.

Toxocara canis and Toxocara cati are globally distributed, zoonotic roundworm parasites. Human infection can have serious clinical consequences including blindness and brain disorders. In addition to ingesting environmental eggs, humans can become infected by eating infective larvae in raw or undercooked meat products. To date, no studies have assessed the prevalence of Toxocara spp. larvae in meat from animals consumed as food in the UK or assessed tissue exudates for the presence of anti-Toxocara antibodies. This study aimed to assess the potential risk to consumers eating meat products from animals infected with Toxocara spp. Tissue samples (n=226), either muscle or liver, were obtained from 155 different food producing animals in the south, southwest and east of England, UK. They were processed by artificial digestion followed by microscopic sediment evaluation for Toxocara spp. larvae, and tissue exudate samples (n=141) were tested for the presence of anti-Toxocara antibodies using a commercial ELISA kit. A logistic regression model was used to compare anti-Toxocara antibody prevalence by host species, tissue type and source. While no larvae were found by microscopic examination after tissue digestion, the overall prevalence of anti-Toxocara antibodies in tissue exudates was 27.7%. By species, 35.3% of cattle (n=34), 15.0% of sheep (n=60), 54.6% of goats (n=11) and 61.1% of pigs (n=18) had anti-Toxocara antibodies. Logistic regression analysis found pigs were more likely to be positive for anti-Toxocara antibodies (odds ratio (OR) = 2.89, P=0.0786) compared with the other species sampled, but only at a 10% significance level. The high prevalence of anti-Toxocara antibodies in tissue exudates suggests that exposure of food animals to this parasite is common in England. 
Tissue exudate serology on meat products within the human food chain could be applied in support of food safety and to identify practices that increase risks of foodborne transmission of zoonotic toxocariasis.

Abstract for the DC conference

Background: The COVID-19 pandemic has disrupted planned annual antibiotic mass drug administration (MDA) activities that have formed the cornerstone of the largely successful global efforts to eliminate trachoma as a public health problem. Methods: Using a mathematical model, we investigate the impact of interruption to MDA in trachoma-endemic settings. We evaluate potential measures to mitigate this impact and consider alternative strategies for accelerating progress in those areas where the trachoma elimination targets may not be achievable otherwise. Results: We demonstrate that for districts that were hyperendemic at baseline, or where the trachoma elimination thresholds have not already been achieved after three rounds of MDA, the interruption to planned MDA could lead to a delay to reaching elimination targets greater than the duration of interruption. We also show that an additional round of MDA in the year following MDA resumption could effectively mitigate this delay. For districts where the probability of elimination under annual MDA was already very low, we demonstrate that more intensive MDA schedules are needed to achieve agreed targets. Conclusion: Through appropriate use of additional MDA, the impact of COVID-19 in terms of delay to reaching trachoma elimination targets can be effectively mitigated. Additionally, more frequent MDA may accelerate progress towards 2030 goals.

This study assessed the elemental status of cross-bred dairy cows in smallholder farms in Sri Lanka, with the aim of establishing the elemental baseline and identifying possible deficiencies. For this purpose, 458 milk, hair, serum and whole blood samples were collected from 120 cows in four regions of Northern and Northwestern Sri Lanka (namely Vavuniya, Mannar, Jaffna and Kurunegala). Farmers also provided a total of 257 samples of feed, which included local fodder as well as 79 supplement materials. The concentrations of As, Ca, Cd, Co, Cr, Cu, Fe, I, K, Mg, Mn, Mo, Na, Ni, Pb, Se, V and Zn were determined by inductively coupled plasma mass spectrometry (ICP-MS). Evaluation of the data revealed that all cows in this study could be considered deficient in I and Co (18.6-78.5 µg L⁻¹ I and 0.06-0.65 µg L⁻¹ Co, in blood serum) when compared with deficiency upper boundary levels of 0.70 µg L⁻¹ Co and 50 µg L⁻¹ I. Poor correlations were found between the composition of milk or blood and that of hair, which suggests that hair is not a good indicator of mineral status. Most local fodders meet dietary requirements, with Sarana grass offering the greatest nutritional profile. Principal component analysis (PCA) was used to assess differences in the elemental composition of the diverse types of feed, as well as regional variability, revealing clear differences between forage, concentrates and nutritional supplements, with the latter showing higher concentrations of non-essential or even toxic elements, such as Cd and Pb.

Background: A large number of studies have assessed risk factors for infection with soil-transmitted helminths (STH), but few have investigated the interactions between the different parasites or compared these across host species. Here, we assessed the associations between Ascaris, Trichuris, hookworm, strongyle and Toxocara infections in the Philippines in human and animal hosts. Methods: Faecal samples were collected from humans and animals (dogs, cats and pigs) in 252 households from four villages in southern Philippines and intestinal helminth infections were assessed by microscopy. Associations between worm species were assessed using multiple logistic regression. Results: Ascaris infections showed a similar prevalence in humans (13.9%) and pigs (13.7%). Hookworm was the most prevalent infection in dogs (48%); the most prevalent infection in pigs was strongyles (42%). The prevalences of hookworm and Toxocara in cats were similar (41%). Statistically significant associations were observed between Ascaris and Trichuris and between Ascaris and hookworm infections in humans, and also between Ascaris and Trichuris infections in pigs. Dual and triple infections were observed, which were more common in dogs, cats and pigs than in humans. Conclusions: Associations are likely to exist between STH species in humans and animals, possibly due to shared exposures and transmission routes. Individual factors and behaviours will play a key role in the occurrence of co-infections, which will have effects on disease severity. Moreover, the implications of co-infection for the emergence of zoonoses need to be explored further.

Existing action recognition methods are typically actor-specific due to the intrinsic topological and apparent differences among the actors. This requires actor-specific pose estimation (e.g., humans vs. animals), leading to cumbersome model design complexity and high maintenance costs. Moreover, they often focus on learning the visual modality alone and single-label classification whilst neglecting other available information sources (e.g., class name text) and the concurrent occurrence of multiple actions. To overcome these limitations, we propose a new approach called 'actor-agnostic multi-modal multi-label action recognition,' which offers a unified solution for various types of actors, including humans and animals. We further formulate a novel Multi-modal Semantic Query Network (MSQNet) model in a transformer-based object detection framework (e.g., DETR), characterized by leveraging visual and textual modalities to represent the action classes better. The elimination of actor-specific model designs is a key advantage, as it removes the need for actor pose estimation altogether. Extensive experiments on five publicly available benchmarks show that our MSQNet consistently outperforms the prior arts of actor-specific alternatives on human and animal single- and multi-label action recognition tasks by up to 50%. Code is made available at https://github.com/mondalanindya/MSQNet.

Trachoma is a neglected tropical disease and the leading infectious cause of blindness worldwide. The current World Health Organization goal for trachoma is elimination as a public health problem, defined as reaching a prevalence of trachomatous inflammation-follicular below 5% in children (1-9 years) and a prevalence of trachomatous trichiasis in adults below 0.2%. Current targets to achieve elimination were set to 2020 but are being extended to 2030. Mathematical and statistical models suggest that 2030 is a realistic timeline for elimination as a public health problem in most trachoma endemic areas. Although the goal can be achieved, it is important to develop appropriate monitoring tools for surveillance after having achieved the elimination target to check for the possibility of resurgence. For this purpose, a standardized serological approach or the use of multiple diagnostics in complement would likely be required.

The increasing overlap of resources between human and long-tailed macaque (Macaca fascicularis) (LTM) populations has escalated human-primate conflict. In Malaysia, LTMs are labeled as a 'pest' species due to the macaques' opportunistic nature. This study investigates the activity budget of LTMs in an urban tourism site and how human activities influence it. Observational data were collected from LTMs daily for a period of four months. The observed behaviors were compared across differing levels of human interaction, between different times of day, and between high, medium, and low human traffic zones. LTMs exhibited varying ecological behavior patterns when observed across zones of differing human traffic, e.g., higher inactivity when human presence is high. More concerning is the impact on these animals' welfare and group dynamics as interactions with humans increase; we noted increased inactivity and reduced intra-group interaction. This study highlights the connection that LTMs make between human activity and sources of anthropogenic food. Only through understanding LTM interaction can the cause of human-primate conflict be better understood, and thus, more sustainable mitigation strategies can be generated.

Visceral leishmaniasis (VL) is a neglected tropical disease (NTD) caused by Leishmania protozoa that are transmitted by female sand flies. On the Indian subcontinent (ISC), VL is targeted by the World Health Organization (WHO) for elimination as a public health problem by 2020, which is defined as

A limited understanding of the transmission dynamics of swine disease is a significant obstacle to preventing and controlling disease spread. Therefore, understanding between-farm transmission dynamics is crucial to developing disease forecasting systems to predict outbreaks that would allow the swine industry to tailor control strategies. Our objective was to forecast weekly porcine epidemic diarrhoea virus (PEDV) outbreaks by generating maps to identify current and future PEDV high-risk areas, and simulating the impact of control measures. Three epidemiological transmission models were developed and compared: PigSpread, a novel epidemiological modelling framework developed specifically to model disease spread in swine populations, and two models built on previously developed ecosystems, SimInf (stochastic disease spread simulations) and PoPS (Pest or Pathogen Spread). The models were calibrated on true weekly PEDV outbreaks from three spatially related swine production companies. Prediction accuracy across models was compared using the receiver operating characteristic area under the curve (AUC). Model outputs had a general agreement with observed outbreaks throughout the study period. PoPS had an AUC of 0.80, followed by PigSpread with 0.71, and SimInf had the lowest at 0.59. Our analysis estimates that the combined strategies of herd closure, controlled exposure of gilts to live viruses (feedback) and on-farm biosecurity reinforcement reduced the number of outbreaks. On average, a 76% to 89% reduction was seen in sow farms, while in gilt development units (GDU) the reduction was between 33% and 61%, when these strategies were deployed to sow and GDU farms located in probabilistic high-risk areas. Our multi-model forecasting approach can be used to prioritize surveillance and intervention strategies for PEDV and other diseases, potentially leading to more resilient and healthier pig production systems.
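The AUC used above to compare models can be read as the probability that a randomly chosen farm with an outbreak receives a higher predicted risk than a randomly chosen farm without one. A minimal sketch of that pairwise definition follows; the scores and outcomes are invented toy values, not model outputs from the study, and `roc_auc` is a hypothetical helper name.

```python
def roc_auc(scores, labels):
    """Area under the ROC curve via pairwise comparison.

    scores : predicted outbreak risk for each farm-week
    labels : 1 if an outbreak was observed, 0 otherwise
    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for q in negatives:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical toy predictions for six farm-weeks.
toy_scores = [0.9, 0.6, 0.7, 0.2, 0.8, 0.4]
toy_outcomes = [1, 1, 0, 0, 1, 0]
toy_auc = roc_auc(toy_scores, toy_outcomes)
print(f"AUC = {toy_auc:.3f}")
```

An AUC of 0.5 corresponds to ranking no better than chance and 1.0 to perfect ranking, which gives a scale for the values of 0.80, 0.71 and 0.59 reported in the abstract.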

Cystic echinococcosis (CE) is a zoonotic disease of global relevance that leads to significant morbidity and economic losses in the livestock industry. Effective intervention and surveillance strategies are essential and available for controlling and preventing the spread of this disease. As a Neglected Tropical Disease (NTD), one of the main obstacles of control and surveillance is resource constraints and lack of sustainable investments. The Modelling Approaches for Cystic Echinococcosis (MACE) project aims to address these issues by utilizing an interdisciplinary approach that combines spatial analysis, individual-based transmission modelling, and stakeholder elicitation techniques.

Objective: Cystic echinococcosis (CE) is a parasitic zoonosis caused by Echinococcus granulosus sensu lato. Immunodiagnostic techniques such as Western blot (WB) or enzyme-linked immunosorbent assay (ELISA), with different antigens, can be applied to the diagnosis of sheep for epidemiological surveillance purposes in control programs. However, their use is limited by the existence of antigenic cross-reactivity between different species of Taeniidae present in sheep. Therefore, the usefulness of establishing surveillance systems based on the identification of infection present in a livestock establishment, known as the (Epidemiological) Implementation Unit (IU), needs to be evaluated. Materials and Methods: A new ELISA diagnostic technique has been recently developed and validated using the recombinant EgAgB8/2 antigen for the detection of antibodies against E. granulosus. To determine detection of infection at the IU level using information from this diagnostic technique, simulations were carried out to evaluate the sample size required to classify IUs as likely infected, using outputs from a recently developed Bayesian latent class analysis model. Results: Relatively small sample sizes (between 14 and 29) are sufficient to achieve a high probability of detection (above 80%), across a range of prevalence values, with the recently recommended Optical Density cut-off value for this novel ELISA (0.496), which optimizes diagnostic sensitivity and specificity. Conclusions: This diagnostic technique could potentially be used to identify the prevalence of infection in an area under control, measured as the percentage of IUs with the presence of infected sheep (infection present), or to individually identify the IU with ongoing transmission, given the presence of infected lambs, on which control measures should be intensified.
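The link between sample size and probability of detection can be illustrated with a simplified binomial calculation. This is not the simulation based on the Bayesian latent class model described in the abstract: it assumes a large flock, independent animals, and counts an IU as detected when at least one sampled animal is both infected and test-positive. The prevalence and sensitivity values are hypothetical placeholders, as are the function names.

```python
def detection_probability(n, prevalence, sensitivity):
    """Probability that at least one of n sampled animals is infected
    and tests positive (large-flock binomial approximation)."""
    per_animal = prevalence * sensitivity
    return 1.0 - (1.0 - per_animal) ** n


def min_sample_size(prevalence, sensitivity, target=0.8, max_n=10_000):
    """Smallest n whose detection probability reaches the target."""
    for n in range(1, max_n + 1):
        if detection_probability(n, prevalence, sensitivity) >= target:
            return n
    raise ValueError("target not reachable within max_n samples")


# Hypothetical inputs: 10% within-IU prevalence, 90% test sensitivity.
n_needed = min_sample_size(prevalence=0.10, sensitivity=0.90)
print(n_needed, round(detection_probability(n_needed, 0.10, 0.90), 3))
```

Lower prevalence or lower sensitivity pushes the required sample size up, which is why the abstract reports a range of sample sizes across prevalence values rather than a single number.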

Many aspects of the porcine reproductive and respiratory syndrome virus (PRRSV) between-farm transmission dynamics have been investigated, but uncertainty remains about the significance of farm type and different transmission routes on PRRSV spread. We developed a stochastic epidemiological model calibrated on weekly PRRSV outbreaks, accounting for the population dynamics in different pig production phases (breeding herds, gilt development units, nurseries and finisher farms) of three hog producer companies. Our model accounted for indirect contacts by the close distance between farms (local transmission), between-farm animal movements (pig flow) and reinfection of sow farms (re-break). The fitted model was used to examine the effectiveness of vaccination strategies and complementary interventions, such as enhanced PRRSV detection and vaccination delays, and to forecast the spatial distribution of PRRSV outbreaks. The results of our analysis indicated that for sow farms, 59% of the simulated infections were related to local transmission (e.g. airborne, feed deliveries, shared equipment) whereas 36% and 5% were related to animal movements and re-break, respectively. For nursery farms, 80% of infections were related to animal movements and 20% to local transmission, while at finisher farms, it was split between local transmission and animal movements. Assuming that the current vaccines are 1% effective in mitigating between-farm PRRSV transmission, vaccination of weaned pigs would reduce the incidence of PRRSV outbreaks by 3%; indeed, under any scenario vaccination alone was insufficient for completely controlling PRRSV spread. Our results also showed that intensifying PRRSV detection and/or vaccinating pigs at placement increased the effectiveness of all simulated vaccination strategies. Our model reproduced the incidence and PRRSV spatial distribution; therefore, this model could also be used to map current and future farms at risk. 
Finally, this model could be a useful tool for veterinarians, allowing them to identify the effect of transmission routes and different vaccination interventions to control PRRSV spread.

Over 240 million people are infected with schistosomiasis. Detecting Schistosoma mansoni eggs in stool using Kato–Katz thick smears (Kato-Katzs) is highly specific but lacks sensitivity. The urine-based point-of-care circulating cathodic antigen test (POC-CCA) has higher sensitivity, but issues include specificity, discrepancy between batches and interpretation of trace results. A semi-quantitative G-score and latent class analyses making no assumptions about trace readings have helped address some of these issues. However, intra-sample and inter-sample variation remains unknown for POC-CCAs. We collected 3 days of stool and urine from 349 and 621 participants, from high- and moderate-endemicity areas, respectively. We performed duplicate Kato-Katzs and one POC-CCA per sample. In the high-endemicity community, we also performed three POC-CCA technical replicates on one urine sample per participant. Latent class analysis was performed to estimate the relative contribution of intra- (test technical reproducibility) and inter-sample (day-to-day) variation on sensitivity and specificity. Within-sample variation for Kato-Katzs was higher than between-sample, with the opposite true for POC-CCAs. A POC-CCA G3 threshold most accurately assesses individual infections. However, to reach the WHO target product profile of the required 95% specificity for prevalence and monitoring and evaluation, a threshold of G4 is needed, but at the cost of reducing sensitivity. This article is part of the theme issue ‘Challenges and opportunities in the fight against neglected tropical diseases: a decade from the London Declaration on NTDs’.

Mass drug administration (MDA) is the main strategy towards lymphatic filariasis (LF) elimination. Progress is monitored by assessing microfilaraemia (Mf) or circulating filarial antigenaemia (CFA) prevalence, the latter being more practical for field surveys. The current criterion for stopping MDA requires […] 95% positive predictive value) thresholds for stopping MDA. The model captured trends in Mf and CFA prevalences reasonably well. Elimination cannot be predicted with sufficient certainty from CFA prevalence in 6-7-year olds. Resurgence may still occur if all children are antigen-negative, irrespective of the number tested. Mf-based criteria also show unfavourable results (PPV […]

Fasciolosis (Fasciola hepatica) and paramphistomosis (Calicophoron daubneyi) are two important infections of livestock. Calicophoron daubneyi is the predominant Paramphistomidae species in Europe, and its prevalence has increased in the last 10–15 years. In Italy, evidence suggests that the prevalence of F. hepatica in ruminants is low in the southern part, but C. daubneyi has been recently reported at high prevalence in the same area. Given the importance of reliable tools for liver and rumen fluke diagnosis in ruminants, this study evaluated the diagnostic performance of the Mini-FLOTAC (MF), Flukefinder® (FF) and sedimentation (SED) techniques to detect and quantify F. hepatica and C. daubneyi eggs using spiked and naturally infected cattle faecal samples.

The accuracy of screening tests for detecting cystic echinococcosis (CE) in livestock depends on characteristics of the host–parasite interaction and the extent of serological cross-reactivity with other taeniid species. The AgB8 kDa protein is considered to be the most specific native or recombinant antigen for immunodiagnosis of ovine CE. A particular DNA fragment coding for rAgB8/2 was identified that provides evidence of specific reaction in the serodiagnosis of metacestode infection. We developed and validated an IgG Enzyme Linked Immunosorbent Assay (ELISA) test using a recombinant antigen B sub-unit EgAgB8/2 (rAgB8/2) of Echinococcus granulosus sensu lato (s.l.) to estimate CE prevalence in sheep. A 273 bp DNA fragment coding for rAgB8/2 was expressed as a fusion protein (∼30 kDa) and purified by affinity chromatography. Evaluation of the analytical and diagnostic performance of the ELISA followed the World Organisation for Animal Health (OIE) manual, including implementation of serum panels from: uninfected lambs (n = 79); experimentally infected (with 2,000 E. granulosus s.l. eggs each) sheep with subsequent evidence of E. granulosus cysts by necropsy (n = 36); and animals carrying other metacestode/trematode infections (n = 20). The latter were used to assess the cross-reactivity of rAgB8/2, with these animals being naturally infected with Taenia hydatigena, Thysanosoma actinioides and/or Fasciola hepatica. EgAgB8/2 showed cross-reaction with only one serum sample from a sheep infected with Ta. hydatigena out of the 20 animals tested. Furthermore, the kinetics of the humoral response over time in five 6-month-old sheep, each experimentally infected with 2,000 E. granulosus s.l. eggs, was evaluated up to 49 weeks (approximately one year) post infection (n = 5). The earliest detectable IgG response against rAgB8/2 was observed in sera from two and four sheep, 7 and 14 days after experimental infection, respectively. The highest immune response across all five animals was found 16 to 24 weeks post infection.
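
As a rough illustration of what the cross-reactivity panel above implies, the sketch below computes the observed proportion (1 reactive serum out of 20) with a 95% Wilson score interval. The choice of the Wilson interval and the helper name `wilson_interval` are assumptions made for this example; the abstract does not state how, or whether, the authors computed an interval.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Counts taken from the abstract: 1 cross-reacting serum among the 20 animals
# carrying other metacestode/trematode infections.
cross_reactive, panel_size = 1, 20

proportion = cross_reactive / panel_size
low, high = wilson_interval(cross_reactive, panel_size)
print(f"Observed cross-reactivity: {proportion:.0%}")
print(f"95% Wilson interval (illustrative): {low:.1%} to {high:.1%}")
```

With a panel of only 20 sera the interval is wide, which is why a single cross-reaction is best read as an indication rather than a precise estimate.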

Reducing the morbidities caused by neglected tropical diseases (NTDs) is a central aim of ongoing disease control programmes. The broad spectrum of pathogens under the umbrella of NTDs leads to a range of negative health outcomes, from malnutrition and anaemia to organ failure, blindness and carcinogenesis. For some NTDs, the most severe clinical manifestations develop over many years of chronic or repeated infection. For these diseases, the association between infection and risk of long-term pathology is generally complex, and the impact of multiple interacting factors, such as age, co-morbidities and host immune response, is often poorly quantified. Mathematical modelling has been used for many years to gain insights into the complex processes underlying the transmission dynamics of infectious diseases; however, long-term morbidities associated with chronic or cumulative exposure are generally not incorporated into dynamic models for NTDs. Here we consider the complexities and challenges for determining the relationship between cumulative pathogen exposure and morbidity at the individual and population levels, drawing on case studies for trachoma, schistosomiasis and foodborne trematodiasis. We explore potential frameworks for explicitly incorporating long-term morbidity into NTD transmission models, and consider the insights such frameworks may bring in terms of policy-relevant projections for the elimination era. This article is part of the theme issue ‘Challenges and opportunities in the fight against neglected tropical diseases: a decade from the London Declaration on NTDs’.

Background: Co-infection with multiple soil-transmitted helminth (STH) species is common in communities with a high STH prevalence. The life histories of STH species share important characteristics, particularly in the gut, and there is the potential for interaction, but evidence on whether interactions may be facilitating or antagonistic is limited. Methods: Data from a pretreatment cross-sectional survey of STH egg deposition in a tea plantation community in Sri Lanka were analysed to evaluate patterns of co-infection and changes in egg deposition. Results: There were positive associations between Trichuris trichiura (whipworm) and both Necator americanus (hookworm) and Ascaris lumbricoides (roundworm), but N. americanus and Ascaris were not associated. N. americanus and Ascaris infections had lower egg depositions when they were in single infections than when they were co-infecting. There was no clear evidence of a similar effect of co-infection in Trichuris egg deposition. Conclusions: Associations in prevalence and egg deposition in STH species may vary, possibly indicating that effects of co-infection are species dependent. We suggest that between-species interactions that differ by species could explain these results, but further research in different populations is needed to support this theory. © The Author(s) 2018. Published by Oxford University Press on behalf of Royal Society of Tropical Medicine and Hygiene.

Defense against infection incurs costs as well as benefits that are expected to shape the evolution of optimal defense strategies. In particular, many theoretical studies have investigated contexts favoring constitutive versus inducible defenses. However, even when one immune strategy is theoretically optimal, it may be evolutionarily unachievable. This is because evolution proceeds via mutational changes to the protein interaction networks underlying immune responses, not by changes to an immune strategy directly. Here, we use a theoretical simulation model to examine how underlying network architectures constrain the evolution of immune strategies, and how these network architectures account for desirable immune properties such as inducibility and robustness. We focus on immune signaling because signaling molecules are common targets of parasitic interference but are rarely studied in this context. We find that in the presence of a coevolving parasite that disrupts immune signaling, hosts evolve constitutive defenses even when inducible defenses are theoretically optimal. This occurs for two reasons. First, there are relatively few network architectures that produce immunity that is both inducible and also robust against targeted disruption. Second, evolution toward these few robust inducible network architectures often requires intermediate steps that are vulnerable to targeted disruption. The few networks that are both robust and inducible consist of many parallel pathways of immune signaling with few connections among them. In the context of relevant empirical literature, we discuss whether this is indeed the most evolutionarily accessible robust inducible network architecture in nature, and when it can evolve.

Vampire bat-transmitted rabies has recently become the leading cause of rabies mortality in both humans and livestock in Latin America. Evaluating risk of transmission from bats to other animal species has thus become a priority in the region. An integrated bat-rabies dynamic modeling framework quantifying spillover risk to cattle farms was developed. The model is spatially explicit and is calibrated to the state of São Paulo, using real roost and farm locations. Roost and farm characteristics, as well as environmental data through an ecological niche model, are used to modulate rabies transmission. Interventions aimed at reducing risk in roosts (such as bat culling or vaccination) and in farms (cattle vaccination) were considered as control strategies. Both interventions significantly reduce the number of outbreaks in farms and disease spread (based on distance from source), with control in roosts being a significantly better intervention. High-risk areas were also identified, which can support ongoing programs, leading to more effective control interventions.

Simple Summary: Echinococcosis is a zoonotic disease relevant to public health in many countries. The disease is present in Brazil; however, it is often underreported due to the lack of mandatory notification of cases across all Brazilian states. The records of two national databases were accessed during the period of 1995-2016 to describe the registered cases and deaths from echinococcosis in the country. Demographic, epidemiological, and health care data related to the occurrence of disease, and deaths attributed to echinococcosis are described. During the study period, 7955 hospitalizations were recorded due to echinococcosis, with 185 deaths. In a second database recording just mortality, a further 113 deaths were documented. Deaths were observed in every state of Brazil. When comparing between states, there was great variability in mortality rates, possibly indicating differences in the quality of health care received by patients and reinforcing the need to expand the compulsory notification of the disease across the country. Echinococcosis is a zoonotic disease relevant to public health in many countries, on all continents except Antarctica. The objective of the study is to describe the registered cases and mortality from echinococcosis in Brazil, from 1995 to 2016. The records of two national databases, the Hospital Information System (HIS) and the Mortality Information System (MIS), were accessed during the period of 1995-2016. Demographic, epidemiological, and health care data related to the occurrence of disease and deaths attributed to echinococcosis in Brazil are described. The results showed that 7955 records of hospitalizations were documented in the HIS during the study period, with 185 deaths from echinococcosis, and 113 records of deaths were documented in the MIS. Deaths were observed in every state of Brazil in the period. When comparing between states, the HIS showed great variability in mortality rates, possibly indicating heterogeneity in diagnosis and in the quality of health care received by patients. Less severe cases that do not require specialized care are not recorded by the information systems, thus the true burden of the disease could be underrepresented in the country. A change in the coding of disease records in the HIS in the late 1990s (the integration of echinococcosis cases with other pathologies) led to the loss of specificity of the records. The records showed a wide geographic distribution of deaths from echinococcosis, reinforcing the need to expand the notification of the disease in Brazil. Currently, notification of cases is compulsory in the state of Rio Grande do Sul.
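
For orientation only, the national HIS counts quoted above can be turned into a crude in-hospital fatality proportion. The sketch below does that arithmetic. It is not a figure reported in the abstract, the variable names are illustrative, and state-level rates (which the abstract says vary widely) cannot be derived from these totals.

```python
# Counts quoted in the abstract (HIS, 1995-2016).
hospitalizations = 7955
in_hospital_deaths = 185

# Crude proportion of recorded hospitalizations that ended in death.
# This ignores state, age, and coding changes, so it is only a rough summary.
crude_fatality = in_hospital_deaths / hospitalizations
deaths_per_1000 = 1000 * crude_fatality

print(f"Crude in-hospital fatality: {crude_fatality:.2%}")
print(f"Deaths per 1000 hospitalizations: {deaths_per_1000:.1f}")
```

Because less severe cases are not captured by the HIS, this proportion describes hospitalized cases only and is likely to overstate fatality among all infections.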